AI Accelerates Milky Way Star Simulation by 113x

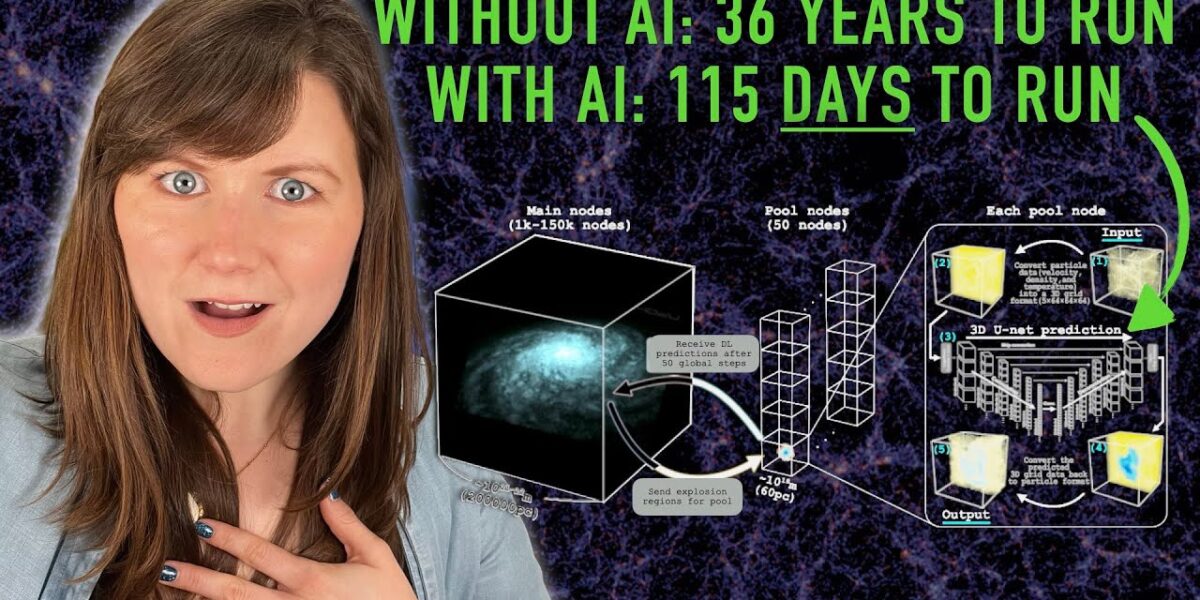

Researchers have developed an AI-powered simulation that models the Milky Way's 100 billion stars 113 times faster than previous methods. This breakthrough dramatically accelerates galactic simulations by using neural networks to predict supernova impacts, reducing a 36-year run time to just 115 days.


Simulating the cosmos has long been the astrophysicist’s laboratory, a powerful tool to unravel the universe’s mysteries where direct experimentation is impossible. From the violent death throes of a single supernova to the grand ballet of galactic formation, complex computer models allow scientists to probe the fundamental forces of physics (gravity, magnetic fields, and particle interactions) over eons. However, the sheer scale of the universe, particularly our own Milky Way galaxy with its estimated 100 billion stars, presents a formidable computational hurdle. Now a study by Keiya Hirashima and collaborators has unveiled a new approach that uses artificial intelligence to accelerate these simulations dramatically, reducing a projected 36-year run time to a mere 115 days.

The Computational Conundrum of Galactic Scale

The challenge in simulating a galaxy like the Milky Way lies not just in the number of stars, but in the intricate web of interactions and the vast disparities in scale. Current simulations often simplify reality by representing clusters of stars as single particles. For instance, a simulation might use a billion particles, with each representing roughly 100 stars. While this conserves computational resources, it sacrifices the crucial details of individual stellar lifecycles and their localized impacts, which can propagate and influence the entire galactic structure over time.
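To make the coarse-graining concrete: a billion particles at roughly 100 stars each gives 10^9 × 100 = 10^11, the 100 billion stars quoted above. The toy sketch below merges groups of stars into single "super-particles" that keep only their total mass and centre of mass. The function name `coarse_grain`, the grouping of consecutive stars, and the random masses and positions are all assumptions made for illustration. Real codes build their particles very differently.

```python
import numpy as np


def coarse_grain(masses, positions, stars_per_particle):
    """Merge consecutive groups of stars into single 'super-particles'.

    Each super-particle keeps only the summed mass and the mass-weighted
    mean position (centre of mass) of its group. Individual stellar
    properties such as mass, age and fate are discarded.
    """
    if len(masses) % stars_per_particle != 0:
        raise ValueError("number of stars must be a multiple of stars_per_particle")
    m = masses.reshape(-1, stars_per_particle)          # (groups, k)
    x = positions.reshape(-1, stars_per_particle, 3)    # (groups, k, 3)
    total_mass = m.sum(axis=1)                          # (groups,)
    centre_of_mass = (m[:, :, None] * x).sum(axis=1) / total_mass[:, None]
    return total_mass, centre_of_mass


rng = np.random.default_rng(0)
masses = rng.uniform(0.1, 10.0, size=1_000)   # toy stellar masses (solar masses)
positions = rng.normal(size=(1_000, 3))       # toy positions (arbitrary units)
M, X = coarse_grain(masses, positions, stars_per_particle=100)
print(M.shape, X.shape)  # (10,) (10, 3)
```

A thousand stars become ten particles. Total mass is conserved, but nothing about any single star survives, and that is exactly the detail the article says is lost.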

The problem is compounded by several factors:

- Gravity’s Reach: Every particle in a simulation, whether a star, gas cloud, or dark matter component, exerts gravitational influence on every other particle. When gravity is computed directly, the number of pairwise interactions grows with the square of the particle count. Doubling the number of particles therefore roughly quadruples the calculations, a quadratic rather than merely linear increase in computational demand.

- Multi-Physics Complexity: Beyond gravity, simulations must account for magnetic fields, the thermodynamics of gas particles (heating and cooling), and the complex chemistry of stellar composition.

- Scale Disparities: The universe operates across immense ranges of distance, temperature, and time. A star system might span a fraction of a light-year, while the Milky Way itself is about 100,000 light-years across, embedded within a dark matter halo extending perhaps 600,000 light-years. Temperatures can range from 10 million degrees Celsius during a supernova to just 10 degrees above absolute zero in interstellar gas clouds.

- Temporal Dynamics: Stellar events like supernovae unfold rapidly, over seconds to years, releasing immense energy and altering their surroundings. In contrast, a full rotation of the Milky Way takes hundreds of millions of years. Capturing both the fleeting intensity of a supernova and the slow evolution of the galaxy requires time steps short enough to resolve the explosion, sustained across a run long enough to follow the galaxy. That means many more computational steps and thus more time.
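The quadratic cost of gravity shows up in a direct-summation sketch, where each particle's acceleration is summed over every other particle. This is a minimal NumPy illustration under simplifying assumptions: units with G = 1, a small softening length to avoid division by zero, and names such as `direct_accelerations` and `pair_count` chosen here. Production galaxy codes generally use tree or fast-multipole approximations that scale closer to N log N, so treat this as a picture of the scaling argument, not of how the Milky Way runs are computed.

```python
import numpy as np


def direct_accelerations(positions, masses, G=1.0, softening=1e-3):
    """Direct-summation gravity: every particle feels every other particle."""
    # Pairwise separation vectors r_ij = x_j - x_i, shape (N, N, 3).
    # Note that this array alone already needs memory proportional to N^2.
    diff = positions[None, :, :] - positions[:, None, :]
    dist2 = (diff ** 2).sum(axis=-1) + softening ** 2   # softened |r_ij|^2
    inv_r3 = dist2 ** -1.5
    np.fill_diagonal(inv_r3, 0.0)                       # no self-force
    # a_i = G * sum_j m_j * r_ij / |r_ij|^3
    return G * (diff * (masses[None, :, None] * inv_r3[:, :, None])).sum(axis=1)


def pair_count(n):
    """Number of distinct particle pairs a direct method must evaluate."""
    return n * (n - 1) // 2


for n in (1_000, 2_000, 4_000):
    print(n, pair_count(n))
# 1000 499500
# 2000 1999000
# 4000 7998000
```

Each doubling of `n` multiplies the pair count by roughly four.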

Supernovae: Small Events, Big Ripples

Supernovae, the explosive deaths of massive stars, are a prime example of small-scale events with galaxy-wide consequences. A single supernova, though minuscule on a galactic scale, releases an enormous amount of energy in a concentrated region. This blast can heat surrounding gas, trigger the formation of new stars, or even expel material from the galaxy. If simulations smooth over these events due to insufficient spatial or temporal resolution, they risk missing these critical ripple effects, leading to an incomplete understanding of galactic evolution.

Traditional methods to address this, such as ‘sub-grid models’ that refine resolution only in specific areas, still struggle with the time resolution problem. Smaller time steps mean updating and recording particle states more often, which rapidly consumes computational resources. Even pooling the power of the world’s most advanced supercomputers doesn’t entirely solve the issue, because communication between processors can take longer than the calculations themselves.
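The time-step problem is largely arithmetic. Covering a fixed span T with steps of size Δt takes T/Δt steps. If one region needs a short step, a simulation that shares a single step across all particles has to use that step everywhere. The step sizes below are hypothetical round numbers chosen only to show the scaling. They are not values from the study.

```python
import math


def steps_needed(total_time_yr, dt_yr):
    """Number of fixed-size steps needed to cover total_time_yr."""
    return math.ceil(total_time_yr / dt_yr)


def shared_timestep(dt_candidates):
    """With one shared step, the whole simulation must advance at the pace
    of its most demanding region, i.e. the smallest required step."""
    return min(dt_candidates)


T = 1e9               # one billion years, the span discussed in the article
quiet_gas_dt = 1e4    # hypothetical step adequate for quiescent gas (years)
supernova_dt = 1e2    # hypothetical step needed to resolve a blast (years)

dt = shared_timestep([quiet_gas_dt, supernova_dt])
print(steps_needed(T, quiet_gas_dt))  # 100000
print(steps_needed(T, dt))            # 10000000
```

A hundredfold shorter step means a hundredfold more steps over the same span. This is one reason many production codes give particles individual or hierarchical time steps instead of a single global one.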

AI as the Accelerator

Recognizing these limitations, the research team turned to machine learning, specifically a deep neural network. Instead of simulating every supernova directly within the main galactic simulation, they adopted a novel strategy.

Their approach involved two key components:

- Training Data: They first ran numerous detailed, high-resolution simulations of individual supernovae using existing computational resources.

- AI Prediction Engine: A separate computational module, powered by a neural network, was trained on this extensive dataset of supernova simulations. This AI was tasked with learning the complex physics and outcomes of supernovae.
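The two-stage idea, running expensive simulations and then fitting a fast model to their outputs, is the general surrogate-model pattern. The sketch below shows that pattern at toy scale with a one-hidden-layer network trained by plain gradient descent in NumPy. Everything specific here is an assumption. `toy_supernova_sim` is a made-up cheap function standing in for the high-resolution supernova runs, its two inputs and single output are invented, and the architecture and hyperparameters are arbitrary choices, not the network used in the study.

```python
import numpy as np


def toy_supernova_sim(x):
    """Hypothetical stand-in for an expensive high-resolution supernova run.

    Column 0 = local gas density, column 1 = explosion energy (arbitrary units).
    Returns one made-up output per row, shape (N, 1).
    """
    density, energy = x[:, 0], x[:, 1]
    return (energy * np.exp(-density))[:, None]


def train_surrogate(X, Y, hidden=32, lr=0.02, steps=3000, seed=0):
    """Fit a one-hidden-layer tanh network to (X, Y) by full-batch gradient
    descent on the mean-squared error. Returns (predict, loss history)."""
    rng = np.random.default_rng(seed)
    W1 = rng.normal(scale=0.5, size=(X.shape[1], hidden))
    b1 = np.zeros(hidden)
    W2 = rng.normal(scale=0.5, size=(hidden, Y.shape[1]))
    b2 = np.zeros(Y.shape[1])
    losses = []
    for _ in range(steps):
        h = np.tanh(X @ W1 + b1)           # hidden activations
        pred = h @ W2 + b2                 # network output
        err = pred - Y
        losses.append(float((err ** 2).mean()))
        # Backpropagate the MSE gradient (computed before any weights change).
        g_pred = 2.0 * err / err.size
        g_h = (g_pred @ W2.T) * (1.0 - h ** 2)
        W2 -= lr * (h.T @ g_pred)
        b2 -= lr * g_pred.sum(axis=0)
        W1 -= lr * (X.T @ g_h)
        b1 -= lr * g_h.sum(axis=0)

    def predict(x):
        """Cheap surrogate evaluation with the trained weights."""
        return np.tanh(x @ W1 + b1) @ W2 + b2

    return predict, losses


rng = np.random.default_rng(1)
X_train = rng.uniform(0.0, 2.0, size=(512, 2))   # sampled input conditions
Y_train = toy_supernova_sim(X_train)             # "expensive" training outputs
predict, losses = train_surrogate(X_train, Y_train)
print(f"MSE: {losses[0]:.4f} -> {losses[-1]:.4f}")
```

Once trained, `predict` costs a couple of small matrix products per query, however expensive the runs that produced the training data were.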

In the main galactic simulation, when a star reaches the end of its life and is about to go supernova, its properties are fed into the AI prediction engine. The AI rapidly predicts the energy output, elemental distribution, and directional impact of that supernova on its immediate galactic environment, and this prediction is fed back into the main simulation. Crucially, the AI predictions are made using much shorter time steps and run in parallel with the main simulation’s longer time steps. This lets the simulation track the small-scale effects of supernovae without the prohibitive computational cost of simulating each event directly at every step of the galaxy’s evolution.
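The hand-off between the main simulation and the prediction engine can be sketched as a loop that submits each supernova to a worker pool and folds the predictions back in later, so the main loop does not stop for each event. This is a hypothetical, heavily simplified illustration. `Star`, `surrogate_predict`, the one-step delay before results are applied, and the energy formula are all invented for the example, and the surrogate's own internal time stepping is not modelled. A thread pool is used only to keep the example self-contained. A real system would more plausibly run the surrogate on separate processors.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class Star:
    ident: int
    mass: float        # solar masses
    age: float         # years
    lifetime: float    # years until the star explodes (toy value)
    exploded: bool = False


def surrogate_predict(star):
    """Hypothetical stand-in for the trained network.

    Returns a made-up energy yield (erg) scaled by stellar mass. A real
    surrogate would return the predicted state of the surrounding gas.
    """
    return {"ident": star.ident, "energy": 1e51 * star.mass / 10.0}


def run(stars, dt, n_steps, workers=2):
    """Advance a toy main simulation with fixed steps of size dt.

    Each supernova is submitted to a worker pool. Its prediction is applied
    at the start of the following step (or after the final step).
    """
    injected_energy = 0.0
    pending = []
    with ThreadPoolExecutor(max_workers=workers) as pool:
        for _ in range(n_steps):
            # 1. Fold in predictions requested during the previous step.
            for fut in pending:
                injected_energy += fut.result()["energy"]
            pending = []
            # 2. Advance the (greatly simplified) main simulation.
            for star in stars:
                star.age += dt
                if not star.exploded and star.age >= star.lifetime:
                    star.exploded = True
                    # 3. Hand the event to the surrogate without waiting for it.
                    pending.append(pool.submit(surrogate_predict, star))
        # Drain predictions submitted during the final step.
        for fut in pending:
            injected_energy += fut.result()["energy"]
    return injected_energy


stars = [Star(0, 20.0, 0.0, 5e6), Star(1, 10.0, 0.0, 2e7), Star(2, 1.0, 0.0, 1e10)]
print(run(stars, dt=1e6, n_steps=30))  # approximately 3e+51
```

In this run, two of the three toy stars explode within 30 steps. Their predicted energies are added one step after each explosion, while the main loop keeps advancing.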

The 113x Leap Forward

This AI-assisted method has yielded an astonishing 113-fold speed-up compared to previous state-of-the-art simulations. By offloading the computationally intensive task of modeling supernovae to a specialized, parallelized AI, the researchers can now envision running a full, star-by-star simulation of the Milky Way over at least a billion years in a matter of months, rather than decades. This breakthrough is not limited to astrophysics; any complex simulation involving small-scale events with large-scale ripple effects—such as climate modeling—could potentially benefit from this AI-driven acceleration technique.
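A quick consistency check on the headline numbers: dividing the projected 36 years by the 113-fold speed-up gives roughly 116 days. That matches the quoted 115 days once rounding in both inputs is allowed for. The 365.25-day year is the only assumption added here.

```python
# Consistency check of the article's headline figures.
years_before = 36                     # projected run time without the AI surrogate
speedup = 113                         # reported speed-up factor
days_before = years_before * 365.25   # assumes a 365.25-day year
days_after = days_before / speedup
print(f"{days_after:.1f} days")  # 116.4 days
```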

What Comes Next?

The paper by Hirashima and collaborators primarily focused on the computational methodology and demonstrated the dramatic reduction in simulation time as a proof of concept. The next critical step is to rigorously verify the astrophysical accuracy of the results produced by this AI-enhanced simulation. Scientists will need to compare the outputs with observational data and established astrophysical models to ensure that the AI’s predictive approach doesn’t introduce biases or lead to significantly different conclusions compared to direct, albeit slower, simulations.
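One simple way to look for such biases is to run the same cases with both the surrogate and a direct simulation and then summarise the residuals. A non-zero mean residual points to a systematic offset, while the root-mean-square error measures typical scatter. The helper below is hypothetical and the three value pairs in the example are made up. It illustrates the kind of check involved and does not describe how the researchers validate their results.

```python
import numpy as np


def bias_report(surrogate, reference):
    """Summarise how surrogate outputs deviate from direct-simulation values."""
    surrogate = np.asarray(surrogate, dtype=float)
    reference = np.asarray(reference, dtype=float)
    residual = surrogate - reference
    return {
        "mean_bias": float(residual.mean()),                 # systematic offset
        "rmse": float(np.sqrt((residual ** 2).mean())),      # typical error size
        "max_rel_error": float(np.max(np.abs(residual) / np.abs(reference))),
    }


# Made-up example values: surrogate predictions vs. direct-simulation results.
report = bias_report([1.1, 1.9, 4.2], [1.0, 2.0, 4.0])
print(report)
```

A mean bias that is small compared with the RMSE suggests the errors scatter around zero instead of consistently pushing results one way. In practice a check like this would be run over many cases and several physical quantities.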

If the results are validated, this approach promises to revolutionize our understanding of galactic evolution. It opens the door to exploring a wider range of initial conditions, testing more complex theories about galaxy formation and dynamics, and potentially uncovering subtle phenomena previously hidden by computational limitations. The ability to simulate our galaxy with unprecedented detail, particle by particle, star by star, marks a significant leap forward in our quest to comprehend the vast and intricate universe we inhabit.

Source: Simulating ALL 100 billion stars in the Milky Way for the first time (with the help of AI?!) (YouTube)