NVIDIA Unveils Quantum Leap in AI Computing

NVIDIA has unveiled its latest advances in AI computing, centered on the Grace Hopper Superchip, which is designed to speed up both the training of AI models and their use in production (inference). The company says this could accelerate the development of large language models and other advanced AI systems.


NVIDIA, whose chips power much of today’s artificial intelligence industry, has revealed advances that could speed up AI development considerably. At its recent ‘Quantum Day’ event, the company showcased technologies aimed at making AI models train and run much faster. This is a big deal for anyone who builds or relies on advanced AI, such as the large language models (LLMs) behind chatbots and other smart applications.

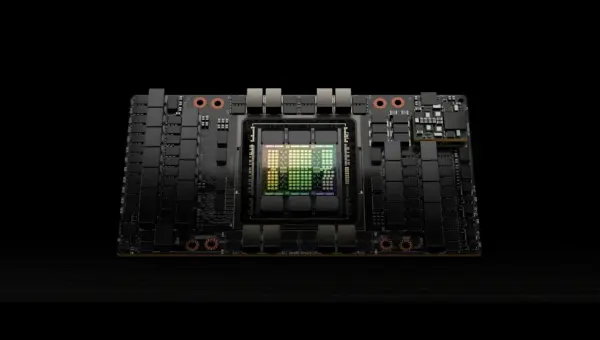

NVIDIA’s announcement centers on its Grace Hopper Superchip, built specifically for AI and high-performance computing. Think of it as a super-powered engine for AI. It pairs a central processing unit (CPU) with a graphics processing unit (GPU) on a single module and links them so they can work together closely. Because the two processors are joined by a very high-bandwidth connection rather than a conventional expansion bus, data moves between them far faster than in a typical server, which matters when AI models must shuttle massive amounts of information back and forth.

Faster Training, Smarter Models

One of the biggest challenges in AI is training these complex models, which takes a great deal of time and computing power. NVIDIA’s new Superchip aims to cut that training time significantly. Shorter training runs let researchers and developers experiment more, build better AI, and bring new applications to users sooner. For example, training a model that understands and generates human-like text might take weeks on older hardware; on faster hardware, the same job could potentially finish in days. How much time is actually saved depends on the size of the model, the amount of training data, and how many chips are used.
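To make the “weeks versus days” idea concrete, here is a rough back-of-the-envelope sketch. It uses a widely cited rule of thumb that training a transformer takes roughly 6 × N × D floating-point operations, where N is the number of model parameters and D is the number of training tokens. The function name and every number below (model size, token count, GPU count, per-GPU throughput, utilization) are hypothetical illustrations, not figures from NVIDIA’s event.

```python
def training_days(params: float, tokens: float, num_gpus: int,
                  peak_flops_per_gpu: float, utilization: float) -> float:
    """Rough wall-clock training time in days (hypothetical helper).

    Uses the ~6 * N * D rule of thumb for total training FLOPs and assumes
    the cluster sustains a fixed fraction (utilization) of its peak throughput.
    """
    total_flops = 6 * params * tokens                       # forward + backward passes, approximated
    effective_flops = num_gpus * peak_flops_per_gpu * utilization  # FLOP/s actually achieved
    seconds = total_flops / effective_flops
    return seconds / 86_400                                 # seconds per day


# Hypothetical job: a 7-billion-parameter model trained on 1 trillion tokens,
# using 256 GPUs that each peak at 1e15 FLOP/s and run at 40% utilization.
fast = training_days(7e9, 1e12, 256, 1e15, 0.4)

# The same job on GPUs with half the per-chip throughput takes twice as long.
slow = training_days(7e9, 1e12, 256, 0.5e15, 0.4)

print(f"faster chips: {fast:.1f} days, slower chips: {slow:.1f} days")
```

Under these assumptions, training time scales inversely with sustained throughput, so doubling effective compute halves the run. Real-world utilization varies widely, which is one reason vendor speedup claims are hard to translate directly into calendar time.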

This speedup comes from several design choices. The Grace Hopper Superchip includes a large amount of high-bandwidth memory (HBM), which is like giving the AI a much bigger and faster workspace. It also uses advanced interconnects, which act as high-speed highways for data traveling between different parts of the chip. Together, these let the CPU and GPU share information with far less waiting, reducing the bottlenecks that slow down both training and running AI models.
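Why does the CPU-to-GPU link matter so much? A simple sketch is to compare how long it would take to move a large block of model data over two different connections. This assumes the payload is the weights of a hypothetical 70-billion-parameter model stored at 2 bytes per parameter (FP16), and it uses idealized peak one-way bandwidths: about 64 GB/s for a PCIe 5.0 x16 slot, and 450 GB/s for NVIDIA’s NVLink-C2C CPU-GPU link (half of the 900 GB/s total bidirectional figure NVIDIA quotes). Sustained real-world throughput will be lower than these peaks.

```python
def transfer_seconds(num_bytes: float, bandwidth_gb_per_s: float) -> float:
    """Idealized time to move num_bytes at a constant bandwidth (1 GB = 1e9 bytes)."""
    return num_bytes / (bandwidth_gb_per_s * 1e9)


# Hypothetical payload: a 70-billion-parameter model at 2 bytes (FP16) per parameter.
weights_bytes = 70e9 * 2

# Assumed peak one-way bandwidths in GB/s; these are idealized figures, not measurements.
links = {
    "PCIe 5.0 x16": 64.0,
    "NVLink-C2C": 450.0,
}

for name, bandwidth in links.items():
    print(f"{name}: {transfer_seconds(weights_bytes, bandwidth):.3f} s")
```

The comparison only captures raw bandwidth; latency, memory coherence, and how often data actually has to cross the link also affect real performance.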

What are LLMs and Gen AI?

To understand why this matters, let’s quickly define some terms. Large Language Models (LLMs) are AI systems trained on vast amounts of text data. They can understand, generate, and summarize human language. Examples include OpenAI’s GPT-4 and Google’s Gemini. Generative AI (Gen AI) refers to AI that can create new content, such as text, images, music, or code. LLMs are one type of Gen AI. Faster, more powerful hardware makes it practical to train larger and more capable versions of these models.

Bridging the Gap to AGI

NVIDIA also looked further ahead, touching on Artificial General Intelligence (AGI). AGI is a theoretical type of AI that would have human-like cognitive abilities, meaning it could understand, learn, and apply knowledge across a wide range of tasks, much as a person does. AGI remains a distant goal, but gains in computing power like NVIDIA’s are widely seen as a necessary step toward it. Being able to train larger, more complex models faster is a key enabler for exploring these advanced AI frontiers.

Why This Matters

The implications of NVIDIA’s new hardware are far-reaching. For AI researchers, it means faster experimentation and the ability to tackle more complex problems. This could lead to breakthroughs in medicine, climate science, and countless other fields. For businesses, it translates to the development of more powerful AI tools and services, from smarter customer service bots to more advanced data analysis software. For everyday users, it means more capable AI applications that can assist with writing, coding, creative tasks, and more.

Faster AI development also means the pace of innovation will likely accelerate. Companies such as OpenAI, Google, and Anthropic, which are at the forefront of AI research, rely heavily on powerful hardware to train their cutting-edge models. Improved hardware from NVIDIA directly benefits these companies, allowing them to push the boundaries of what AI can do. Open-source AI projects can benefit too, since more powerful and more widely available hardware can open up advanced AI development to a broader community.

Availability and Future Outlook

NVIDIA’s Quantum Day event focused on the technological vision and the capabilities of its upcoming hardware. Specific pricing and availability details for the latest Grace Hopper Superchip configurations are typically announced closer to launch. Still, NVIDIA’s hardware has long been a critical component of the AI industry, and these developments signal a continued push toward more powerful and efficient AI computing. The company’s focus remains on providing the foundational technology that powers the next generation of artificial intelligence.

Source: NVIDIA's Quantum Day | here's a glimpse into the future… (YouTube)