Nvidia’s Vera Rubin Challenges AMD’s Memory Dominance

Nvidia's new Vera Rubin platform introduces a novel approach to memory architecture, challenging AMD's dominance in high-bandwidth memory for AI. The innovation aims to overcome key AI bottlenecks and could reshape the competitive landscape.

Nvidia’s Vera Rubin Platform Signals Strategic Shift, Potentially Undermining AMD’s Memory Advantage

Nvidia’s recent unveiling of its Vera Rubin platform at CES has sent ripples through the AI hardware market, with analysts suggesting that the company’s innovative approach to memory architecture could significantly challenge Advanced Micro Devices’ (AMD) long-standing strength in high-bandwidth memory (HBM) for AI applications. While the market’s initial reaction was muted, the underlying technological advancements in Vera Rubin appear poised to reshape the competitive landscape between Nvidia and AMD, impacting the future of their respective data center ecosystems.

Addressing AI’s Six Hard Limits

The development of Vera Rubin is framed by Nvidia as a direct response to six critical bottlenecks currently limiting the advancement of artificial intelligence. These challenges, as outlined by industry experts, include:

- Model Size: The exponential growth in AI model parameters, increasing by approximately 10 times annually, far outpaces the gains in traditional chip performance (around 30% per year).

- Token Count: Reasoning models consume significantly more tokens per prompt, because intermediate reasoning and verification steps can require up to ten times as many tokens as the final output contains.

- Memory Bandwidth (GPU Level): The speed at which GPUs can access data from memory is becoming a primary constraint, leading to expensive GPUs sitting idle.

- Memory Bandwidth (System Level): Efficient data movement across thousands of GPUs in distributed systems is crucial, as overall performance is limited by the slowest component.

- Latency: The demand for near-instantaneous responses in real-time AI applications necessitates rapid processing, a challenge exacerbated by more complex reasoning tasks.

- Power, Cooling, and Grid Capacity: The immense power requirements of AI racks, projected to grow 20-30% annually, strain existing data center infrastructure, necessitating greater efficiency in tokens per watt and tokens per rack.
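To make the first bottleneck concrete, the two growth rates quoted above can be compounded over a few years. The sketch below is illustrative arithmetic only: the function name and the three-year horizon are assumptions chosen for the example, and the rates are simply the approximate 10x and 30% figures from the list.

```python
def growth_gap(years: int, model_growth: float = 10.0, chip_growth: float = 1.3) -> float:
    """Ratio of compounded model-size growth to compounded single-chip performance growth."""
    return (model_growth ** years) / (chip_growth ** years)


# Print how far demand pulls ahead of a single chip as the years compound.
for years in (1, 2, 3):
    print(f"after {years} year(s): gap of roughly {growth_gap(years):.0f}x")
```

Under these assumptions the gap is roughly 8x after one year and about 455x after three, which is why the remaining items in the list concern memory, interconnect, and power rather than raw chip speed.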

Nvidia’s Integrated Approach with Vera Rubin

Nvidia’s Vera Rubin platform represents a departure from incremental performance improvements, focusing instead on a co-designed, multi-chip strategy to tackle these AI limitations holistically. According to Nvidia executives, the platform delivers approximately 10 times more performance per watt compared to its predecessor, Blackwell, a significant leap beyond what traditional Moore’s Law advancements would offer. This performance boost is attributed not just to increased transistor counts but to a strategic distribution of workload across specialized chips.

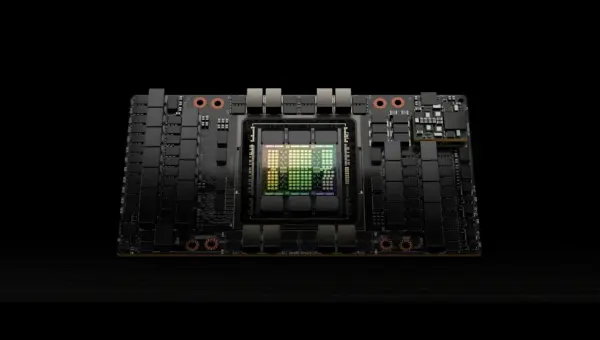

A key innovation within Vera Rubin is its approach to memory. Where a Blackwell GPU offered 192 GB of HBM3e with around 8 terabytes per second of memory bandwidth, a Rubin GPU carries 288 GB of HBM4 with around 22 terabytes per second: 50% more memory and nearly three times the memory bandwidth per GPU. To put this into perspective, a single Rubin GPU moves data through memory so quickly that it could theoretically scan Netflix’s entire video library in just over two minutes, limited only by memory bandwidth.
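The Netflix comparison is a bandwidth-bound estimate: scan time equals data volume divided by sustained memory bandwidth. The sketch below runs that relation in both directions. It uses the roughly 22 TB/s figure quoted above and reads "just over two minutes" as 130 seconds, which is an assumption; the source gives no library size, so the result is only what the claim implies, not a measured number.

```python
TB = 1e12  # bytes per terabyte (decimal units)


def scan_seconds(data_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Time to stream data_bytes once at the given sustained bandwidth."""
    return data_bytes / bandwidth_bytes_per_s


def implied_data_bytes(seconds: float, bandwidth_bytes_per_s: float) -> float:
    """Data volume that can be streamed in the given time at the given bandwidth."""
    return seconds * bandwidth_bytes_per_s


bandwidth = 22 * TB                           # approximate figure quoted in the text
library = implied_data_bytes(130, bandwidth)  # 130 s is an assumed reading of "just over two minutes"
print(f"implied library size: {library / 1e15:.2f} PB")
```

The estimate ignores compute, decoding, and the cost of getting the data into GPU memory in the first place, which is what "limited only by memory bandwidth" concedes.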

Challenging AMD’s Memory-Centric Strategy

This aggressive memory expansion by Nvidia directly confronts AMD’s core strategy. AMD has historically focused on providing GPUs with larger memory capacities, as with the MI455X GPUs in its Helios rack platform, which offer even more memory per GPU than Vera Rubin, albeit at slightly lower bandwidth. AMD’s philosophy centers on fitting large AI models onto fewer GPUs, thereby reducing networking costs and simplifying software deployment.

However, Nvidia’s Vera Rubin introduces a novel solution to the memory bottleneck by implementing a secondary, rack-level memory system for inference context. This system, dubbed Inference Context Memory Storage (ICMS), utilizes cheaper, lower-power solid-state drives managed by Data Processing Units (DPUs). ICMS stores static data like chat histories and reference documents, freeing up the GPU’s expensive HBM for active computation. This approach offers several advantages:

- Memory Augmentation: It effectively adds 15-30% more memory capacity to a rack by offloading static data from HBM.

- Power Efficiency: Because context is held on lower-power SSDs instead of HBM, context storage becomes approximately five times more power-efficient.

- Speed Enhancement: A shared, centralized memory pool reduces redundant data fetching and recomputation across GPUs, minimizing idle time and increasing processing speed.
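The tiering idea behind these advantages can be sketched as a toy two-level store. This is a minimal illustration under stated assumptions, not Nvidia's implementation or API: the class `TieredContextStore`, its method names, and the least-recently-used offload policy are all hypothetical, and sizes are plain numbers standing in for gigabytes.

```python
from collections import OrderedDict


class TieredContextStore:
    """Toy two-tier store: a small fast tier (standing in for HBM) backed by a
    larger shared tier (standing in for the SSD-based context pool)."""

    def __init__(self, fast_capacity_gb: float):
        self.fast_capacity_gb = fast_capacity_gb
        self.fast = OrderedDict()  # session_id -> size_gb, least recently used first
        self.shared = {}           # session_id -> size_gb, offloaded context

    def _fast_used(self) -> float:
        return sum(self.fast.values())

    def put(self, session_id: str, size_gb: float) -> None:
        """Place context in the fast tier, offloading the coldest entries if it overflows."""
        self.shared.pop(session_id, None)
        self.fast[session_id] = size_gb
        self.fast.move_to_end(session_id)
        while self._fast_used() > self.fast_capacity_gb and len(self.fast) > 1:
            cold_id, cold_size = self.fast.popitem(last=False)
            self.shared[cold_id] = cold_size

    def get(self, session_id: str) -> str:
        """Report where the context was found; 'recompute' means neither tier holds it."""
        if session_id in self.fast:
            self.fast.move_to_end(session_id)
            return "fast"
        if session_id in self.shared:
            # Promote back into the fast tier instead of rebuilding the context.
            self.put(session_id, self.shared[session_id])
            return "shared"
        return "recompute"


store = TieredContextStore(fast_capacity_gb=10)
store.put("chat-a", 6)
store.put("chat-b", 6)  # the fast tier overflows, so chat-a is offloaded
print(store.get("chat-a"), store.get("chat-c"))
```

The point of the design is the middle case: a context that has left the fast tier is fetched from the shared pool rather than recomputed, which is the saving the article attributes to ICMS.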

Market Impact and Investor Considerations

The implications of Nvidia’s integrated hardware and software ecosystem, encompassing GPUs, CPUs, DPUs, and the CUDA software stack, are profound. This level of control allows Nvidia to implement such advanced, co-designed solutions. In contrast, AMD’s reliance on third-party networking solutions and a less integrated software environment like ROCm presents a potential disadvantage in rapidly evolving AI infrastructure.

Analysts predict that if current trends in model size and token count continue, AMD’s strategy of solely increasing HBM capacity on individual GPUs may face economic and physical limitations within the next one to two product cycles. The cost, power consumption, and supply constraints of HBM are significant factors. Nvidia’s ability to innovate at the system level, leveraging shared memory pools and specialized processing units, positions it favorably for future AI advancements.

While Nvidia’s Vera Rubin is slated for commercial deployment in the latter half of 2026 and AMD’s Helios platform in Q3 2026, the long-term trajectory suggests a potential shift in market dynamics. Developing a comparable rack-level memory solution could take AMD several years, requiring architectural decisions to be locked in as early as 2027 for a potential 2030 deployment. This timeline provides Nvidia with a significant lead in addressing the escalating demands of AI workloads.

What Investors Should Know

Investors monitoring the AI hardware sector should pay close attention to how memory architecture evolves. Nvidia’s Vera Rubin platform highlights a move towards system-level optimization, integrating various components to overcome fundamental AI limitations. AMD’s current strategy, while effective in the short term, may prove less scalable in the face of ever-increasing model complexity and data requirements. The ability of companies to co-design hardware and software, manage power efficiency, and innovate in memory solutions will be critical determinants of success in the ongoing AI revolution.

Source: Huge AI Memory Breakthrough & Warning for AMD Stock Holders (YouTube)