P vs NP: The $1M Computer Science Riddle Explained

The P vs NP problem, a $1 million challenge from the Clay Mathematics Institute, questions whether problems with easily verifiable solutions can also be quickly solved. This article breaks down the core concepts of P and NP classes, explains NP-complete problems, and explores the mind-bending implications if P were to equal NP.

The P vs NP Problem: A Million-Dollar Mystery in Computer Science

In the vast landscape of computer science, few questions loom as large or as enigmatic as the P versus NP problem. This unsolved conundrum was formally posed in 1971 by Stephen Cook, and it asks a fundamental question about the nature of computation: if a solution to a problem can be verified quickly, can that solution also be found quickly? The Clay Mathematics Institute lists it among its seven Millennium Prize Problems and offers $1 million for a correct proof either way, a measure of its significance.

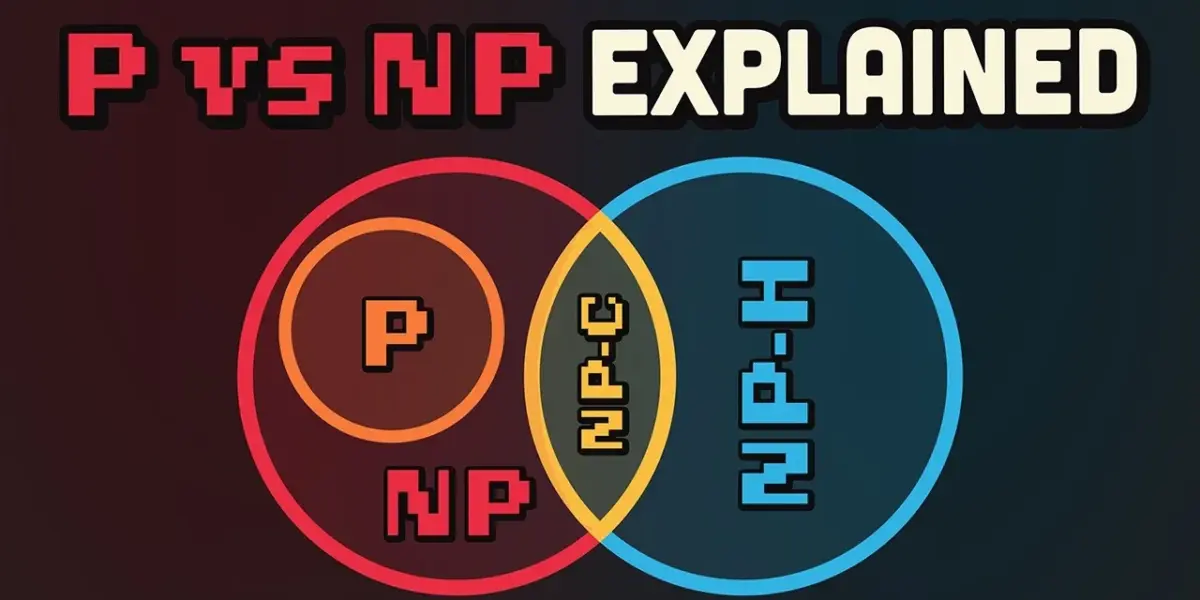

The core of the P vs NP debate lies in two classes of computational problems: P and NP. Class P (polynomial time) contains the problems that computers can solve efficiently. As the input size n grows, the running time grows at most polynomially, like n² or n³, rather than exponentially, like 2ⁿ.
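For readers who want the formal version, here is the standard textbook definition (added here, not taken from the source). In it, TIME(f(n)) denotes the set of decision problems that a deterministic machine can solve in O(f(n)) steps on inputs of size n. The numbers on the second line show why polynomial growth counts as "manageable":

```latex
\mathrm{P} \;=\; \bigcup_{k \ge 1} \mathrm{TIME}\!\left(n^{k}\right)
\qquad\text{e.g. at } n = 100:\quad n^{3} = 10^{6}
\quad\text{vs.}\quad 2^{n} = 2^{100} \approx 1.27 \times 10^{30}
```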

A common analogy is sorting a list of names alphabetically. A larger list takes longer, but with a standard algorithm such as merge sort the work grows roughly in proportion to n log n for n names. That keeps the problem tractable even for very large lists.
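Here is a minimal Python sketch of that claim. It assumes merge sort as the sorting algorithm, since the source names none. The hypothetical `merge_sort` function also counts the comparisons it makes, and that count stays within n·⌈log₂ n⌉.

```python
def merge_sort(names: list[str]) -> tuple[list[str], int]:
    """Return (sorted copy of names, number of pairwise comparisons made)."""
    if len(names) <= 1:
        return list(names), 0
    mid = len(names) // 2
    left, left_count = merge_sort(names[:mid])
    right, right_count = merge_sort(names[mid:])
    comparisons = left_count + right_count
    merged: list[str] = []
    i = j = 0
    # Merge the two sorted halves, one comparison per step.
    while i < len(left) and j < len(right):
        comparisons += 1
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    # One half is exhausted; the rest of the other is already in order.
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged, comparisons


names = ["Turing", "Cook", "Karp", "Levin", "Knuth"]
sorted_names, count = merge_sort(names)
print(sorted_names)  # ['Cook', 'Karp', 'Knuth', 'Levin', 'Turing']
```

Doubling the list slightly more than doubles the comparison count. That is what n log n growth looks like in practice.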

Understanding NP: Verification vs. Discovery

Class NP (nondeterministic polynomial time) contains the problems whose proposed solutions can be verified quickly, in polynomial time. Every problem in P is also in NP. For many NP problems, however, nobody knows how to find a solution from scratch quickly, and the best known algorithms can take an astronomically long time. Famous examples include the Traveling Salesman Problem (in its yes/no form), Sudoku generalized to arbitrarily large grids, and many scheduling tasks.
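To make "verified quickly" concrete, here is a hedged sketch of a Sudoku checker. It handles grids generalized to N × N (N a perfect square), which is how Sudoku is treated in complexity theory. The function name `is_valid_sudoku_solution` is hypothetical. Checking a filled grid takes time polynomial in N, whereas no polynomial-time method is known for completing an arbitrary partial grid.

```python
import math


def is_valid_sudoku_solution(grid: list[list[int]]) -> bool:
    """Verify a completed N x N Sudoku grid, where N = b * b (b = box width).

    Each of the N*N cells is read a constant number of times, so the check
    runs in time polynomial (quadratic) in N.
    """
    size = len(grid)
    box = math.isqrt(size)
    if size == 0 or box * box != size or any(len(row) != size for row in grid):
        return False
    expected = set(range(1, size + 1))
    # Every row must contain each symbol 1..N exactly once.
    if any(set(row) != expected for row in grid):
        return False
    # Every column must too.
    for c in range(size):
        if {grid[r][c] for r in range(size)} != expected:
            return False
    # And every box x box sub-square.
    for top in range(0, size, box):
        for left in range(0, size, box):
            cells = {grid[r][c] for r in range(top, top + box) for c in range(left, left + box)}
            if cells != expected:
                return False
    return True


solved = [
    [1, 2, 3, 4],
    [3, 4, 1, 2],
    [2, 1, 4, 3],
    [4, 3, 2, 1],
]
print(is_valid_sudoku_solution(solved))  # True
```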

Consider prime factorization. Multiplying two large prime numbers is easy for a computer. Given a large composite number, however, no known classical algorithm finds its prime factors in polynomial time. Even the best known methods take super-polynomial time in the number of digits.

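This difficulty underpins modern public-key cryptography, such as RSA encryption. Multiplying primes is in P, while factoring their product is in NP but widely believed not to be in P. Factoring is also not known to be NP-complete, so it is not known to be one of the very hardest problems in NP.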
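The asymmetry can be seen on a toy RSA key. This sketch uses the classic textbook parameters p = 61, q = 53 and public exponent e = 17. It also assumes unpadded "textbook" RSA and naive trial division, and the helper names are hypothetical. Real moduli are 2048 bits or more, far out of reach of trial division. The best known classical method (the general number field sieve) is much faster than trial division but still super-polynomial.

```python
def trial_division(n: int) -> tuple[int, int]:
    """Split a composite n into (p, q) with p the smallest prime factor.

    Tries every d up to sqrt(n). For a b-bit n that is up to about 2**(b/2)
    divisions: exponential in the length of the input.
    """
    d = 2
    while d * d <= n:
        if n % d == 0:
            return d, n // d
        d += 1
    raise ValueError(f"{n} has no nontrivial factor")


def recover_private_exponent(n: int, e: int) -> int:
    """Toy RSA attack: factor the public modulus, then invert e mod phi(n)."""
    p, q = trial_division(n)
    phi = (p - 1) * (q - 1)
    return pow(e, -1, phi)  # modular inverse (Python 3.8+)


# Textbook-sized toy key: n = 61 * 53, public exponent e = 17.
n, e = 3233, 17
d = recover_private_exponent(n, e)
ciphertext = pow(65, e, n)       # "encrypt" the message 65 with the public key
print(d, pow(ciphertext, d, n))  # 2753 65
```

Once the factors are known, the private exponent follows from a single modular inverse. All of the scheme's security rests on the factoring step.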

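The Traveling Salesman Problem further illustrates this distinction. Given a list of cities and the distances between them, the goal is to find the shortest route that visits each city exactly once and returns to the starting city. If someone hands you a proposed route, you can quickly check that it visits every city once and compute its total distance. Confirming that no shorter route exists is not easy, though. That is why complexity theory uses the yes/no version: is there a route of total length at most k? A route under the bound is a certificate anyone can check quickly.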
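Here is a sketch of the quick verification step for the yes/no version of the problem ("is there a round trip of length at most `budget`?"). The function `verify_tour` and the 4-city distance matrix are illustrative assumptions, not from the source.

```python
def verify_tour(dist: list[list[int]], tour: list[int], budget: int) -> bool:
    """Verify a certificate for 'is there a round trip of length <= budget?'.

    O(n) work for n cities: check the tour is a permutation, then sum n legs.
    """
    n = len(dist)
    if len(tour) != n or set(tour) != set(range(n)):
        return False  # the route skips or repeats a city
    length = sum(dist[tour[i]][tour[(i + 1) % n]] for i in range(n))
    return length <= budget


# Symmetric 4-city instance (distances made up for illustration).
dist = [
    [0, 10, 15, 20],
    [10, 0, 35, 25],
    [15, 35, 0, 30],
    [20, 25, 30, 0],
]
print(verify_tour(dist, [0, 1, 3, 2], 80))  # True: 10 + 25 + 30 + 15 = 80
print(verify_tour(dist, [0, 1, 2, 3], 80))  # False: 10 + 35 + 30 + 20 = 95
```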

Finding the absolute shortest route by brute force, however, means checking an enormous number of candidate routes, a number that grows factorially with the number of cities. With n cities there are (n − 1)!/2 distinct round trips, so with just 15 cities there are already about 43.6 billion.
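The count and the brute-force search can be sketched as follows, assuming symmetric distances (the same in both directions). The helper names and the 4-city matrix are hypothetical.

```python
import math
from itertools import permutations


def count_distinct_tours(n: int) -> int:
    """Distinct round trips through n >= 3 cities when distances are symmetric."""
    # Fix the starting city, order the other n - 1, and halve because each
    # loop can be driven in either direction.
    return math.factorial(n - 1) // 2


def brute_force_tsp(dist: list[list[int]]) -> tuple[int, list[int]]:
    """Exact answer by exhaustive search over all (n - 1)! orderings."""
    n = len(dist)
    best_length, best_tour = math.inf, []
    for rest in permutations(range(1, n)):
        tour = [0, *rest]  # always start from city 0
        length = sum(dist[tour[i]][tour[(i + 1) % n]] for i in range(n))
        if length < best_length:
            best_length, best_tour = length, tour
    return best_length, best_tour


dist = [
    [0, 10, 15, 20],
    [10, 0, 35, 25],
    [15, 35, 0, 30],
    [20, 25, 30, 0],
]
print(count_distinct_tours(15))  # 43589145600
print(brute_force_tsp(dist))     # (80, [0, 1, 3, 2])
```

Exhaustive search is exact but hopeless at scale. For 15 cities, this loop would run over 14! ≈ 87 billion orderings, because it counts each tour once per direction.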

In practice, programs facing large instances of such problems often use heuristics or approximation algorithms. These methods aim to find a good-enough solution within a reasonable time instead of guaranteeing the optimal answer, trading optimality for computational feasibility.
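One common heuristic is nearest neighbour: start somewhere and always travel to the closest unvisited city. The sketch below is one illustrative choice, since the source does not name a specific heuristic. The cities are hypothetical points along a straight road at positions 0, 10, -11 and 35.

```python
def nearest_neighbour_tour(dist: list[list[int]], start: int = 0) -> tuple[int, list[int]]:
    """Greedy heuristic: always drive to the closest unvisited city.

    O(n^2) time, but no guarantee that the result is the shortest tour.
    """
    n = len(dist)
    tour = [start]
    unvisited = set(range(n)) - {start}
    while unvisited:
        here = tour[-1]
        # Closest remaining city; ties broken by lower index for determinism.
        nxt = min(unvisited, key=lambda city: (dist[here][city], city))
        tour.append(nxt)
        unvisited.remove(nxt)
    length = sum(dist[tour[i]][tour[(i + 1) % n]] for i in range(n))
    return length, tour


# Hypothetical cities on a straight road; distance = gap between positions.
xs = [0, 10, -11, 35]
line = [[abs(a - b) for b in xs] for a in xs]
print(nearest_neighbour_tour(line))  # (112, [0, 1, 2, 3])
```

Here the greedy tour has length 112, while the best possible tour, such as [0, 1, 3, 2], has length 92. The heuristic gives a fast answer, but not an optimal one.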

NP-Complete Problems: The Hardest of the Hard

Within the NP class there is a special subset known as NP-complete problems. These are the hardest problems in NP in a precise sense: every problem in NP can be transformed (reduced) into any NP-complete problem in polynomial time.

The defining characteristic of an NP-complete problem is that if a polynomial-time solution can be found for *any* single NP-complete problem, then it follows that *all* problems in NP can be solved in polynomial time. In essence, solving one unlocks the secrets to solving them all efficiently.
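Reductions are what make this work. As an illustration (a standard textbook reduction, not from the source), the sketch below translates "can this graph be coloured with k colours?" into a SAT formula in polynomial time. Any SAT solver can then answer the colouring question. The function names are hypothetical, and `brute_force_sat` is only an exponential-time stand-in. A polynomial-time SAT solver dropped in its place would make colouring polynomial too.

```python
from itertools import product


def coloring_to_cnf(num_vertices: int, edges: list[tuple[int, int]], k: int):
    """Reduce 'is this graph k-colourable?' to SAT in polynomial time.

    Variable v*k + c + 1 means "vertex v gets colour c". Clauses use
    DIMACS-style integer literals: x for a variable, -x for its negation.
    """
    def var(v: int, c: int) -> int:
        return v * k + c + 1

    clauses: list[list[int]] = []
    for v in range(num_vertices):
        clauses.append([var(v, c) for c in range(k)])  # at least one colour
        for c1 in range(k):
            for c2 in range(c1 + 1, k):
                clauses.append([-var(v, c1), -var(v, c2)])  # at most one colour
    for u, w in edges:
        for c in range(k):
            clauses.append([-var(u, c), -var(w, c)])  # neighbours must differ
    return num_vertices * k, clauses


def brute_force_sat(num_vars: int, clauses: list[list[int]]) -> bool:
    """Stand-in SAT solver: tries all 2**num_vars assignments."""
    for bits in product([False, True], repeat=num_vars):
        if all(any(bits[abs(lit) - 1] == (lit > 0) for lit in clause) for clause in clauses):
            return True
    return False


triangle = [(0, 1), (1, 2), (0, 2)]
print(brute_force_sat(*coloring_to_cnf(3, triangle, 3)))  # True
print(brute_force_sat(*coloring_to_cnf(3, triangle, 2)))  # False
```

For V vertices and E edges, the formula has k·V variables and V(1 + k(k − 1)/2) + kE clauses. The translation itself is therefore cheap, and all the difficulty is left to the solver.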

The first problem shown to be NP-complete was the Boolean Satisfiability Problem (SAT), a result known as the Cook–Levin theorem. SAT asks whether some assignment of truth values (true or false) to the variables of a Boolean formula makes the whole formula true. Checking a given assignment is straightforward, but finding one can require exploring up to 2ⁿ combinations for n variables.
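Here is a minimal sketch of that asymmetry. It assumes DIMACS-style integer literals as the encoding: 3 means x3 and -3 means NOT x3. `satisfies` checks an assignment in one pass over the formula, while `exhaustive_search` may need to try all 2ⁿ assignments. Both names are hypothetical.

```python
from itertools import product


def satisfies(clauses: list[list[int]], assignment: dict[int, bool]) -> bool:
    """Verifier: one pass over the formula, linear in its size."""
    return all(
        any(assignment[abs(lit)] == (lit > 0) for lit in clause)
        for clause in clauses
    )


def exhaustive_search(clauses: list[list[int]], num_vars: int):
    """Finder: tries assignments in order, up to 2**num_vars of them."""
    tried = 0
    for values in product([False, True], repeat=num_vars):
        tried += 1
        assignment = {i + 1: value for i, value in enumerate(values)}
        if satisfies(clauses, assignment):
            return assignment, tried
    return None, tried


# (x1 OR NOT x2) AND (x2 OR x3) AND (NOT x1 OR NOT x3)
formula = [[1, -2], [2, 3], [-1, -3]]
print(exhaustive_search(formula, 3))  # ({1: False, 2: False, 3: True}, 2)
```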

Since SAT, numerous other important problems have been shown to be NP-complete. They include the yes/no version of the Traveling Salesman Problem, generalized Sudoku, problems arising in circuit design, simplified models of protein folding, and many other computationally intensive tasks vital to science and industry. Because reductions link all of these problems, a breakthrough on one would carry over to every other.

Why This Matters: The Profound Implications of P vs NP

The P vs NP problem is not merely an academic curiosity; its resolution would carry real-world consequences. Since every problem in P is also in NP, the question is whether the two classes are equal or whether P is a strict subset of NP (meaning P does not equal NP).

If P = NP:

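- Cryptography Collapse: Encryption methods, password protection, and cryptocurrency wallets that rely on the computational difficulty of problems like factoring large numbers could become vulnerable. How serious this would be depends on the proof. A non-constructive proof, or an algorithm whose polynomial has a huge exponent, might change little in practice. A fast, practical algorithm, by contrast, would mean a catastrophic breakdown of digital security.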

- Unprecedented Problem Solving: On the positive side, many currently intractable problems in fields like medicine (e.g., drug discovery, protein folding), logistics, artificial intelligence, and operations research could be solved efficiently. Curing diseases, optimizing global supply chains, and solving complex optimization puzzles could become far easier.

- A Universe of Efficiency: Such a scenario suggests a universe that is fundamentally efficient, where every complex puzzle has a shortcut, perhaps hinting at a meticulously designed, computationally optimized reality.

If P ≠ NP:

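- Enduring Security: Current cryptographic systems would remain plausibly secure, but not provably so. P ≠ NP is a statement about worst-case difficulty, while cryptography needs problems that are hard on average, and factoring is not known to be NP-complete. A proof would remove the most direct threat without settling the security of any particular scheme.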

- Fundamental Limits: It would imply inherent computational limits: some problems are hard to solve in the worst case, no matter how much faster conventional computers become. This could suggest a reality with built-in computational constraints, perhaps even supporting theories of simulated universes designed to conserve processing power.

- The Human Element: It reinforces the value of human ingenuity, intuition, and heuristic approaches in tackling complex problems where brute-force computation is infeasible.

The most philosophical, and perhaps unsettling, implication is that our very existence might be tied to computing the answer. The universe could be structured such that the solution to P vs NP is not found through traditional mathematical proof but by humanity itself acting as the computational agent.

The Search Continues

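Despite decades of intense research and countless attempts by brilliant minds, a definitive proof for P vs NP remains elusive. Known proof techniques run into formal barriers: results such as relativization and natural proofs show that whole families of arguments cannot settle the question. Meanwhile, no known algorithm solves any NP-complete problem in polynomial time.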

The $1 million prize from the Clay Mathematics Institute still awaits the individual or team that can settle the P vs NP problem. Until then, it remains one of computer science’s greatest unsolved mysteries and a testament to the depth and elegance of computation.

Source: The greatest unsolved problem in computer science… (YouTube)