Trump’s Disinformation Outrage Exposes Hypocrisy, Fuels AI Fears

President Donald Trump's public condemnation of Iran's disinformation tactics has drawn sharp criticism as hypocritical, given his own history of spreading falsehoods. The analysis examines the rising threat of AI-generated disinformation in politics and its implications for democratic discourse.

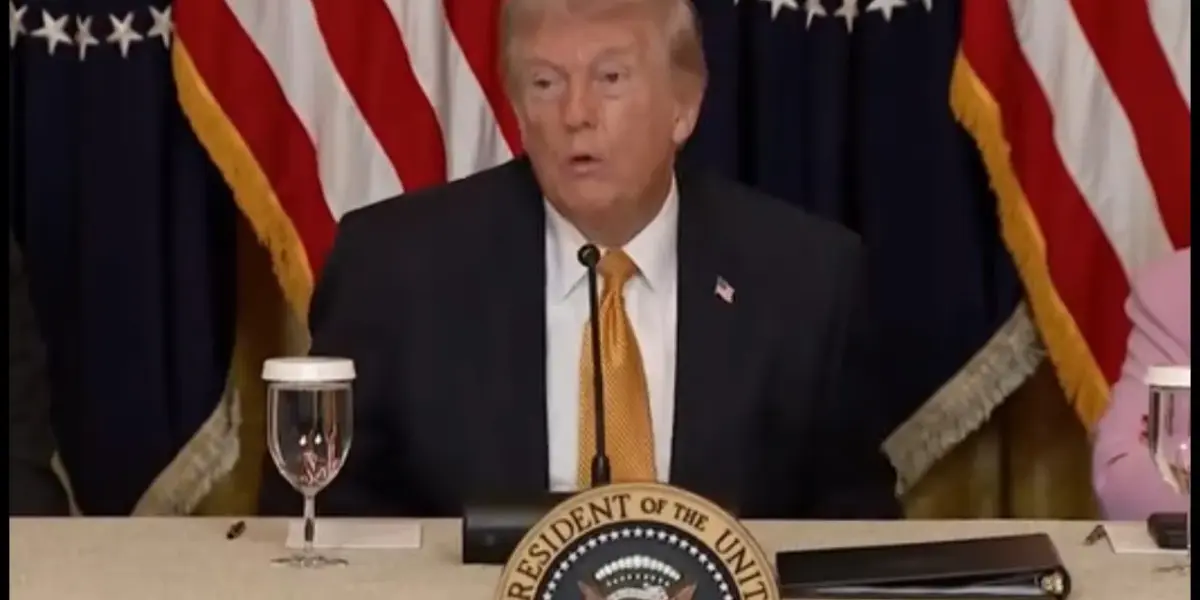

In a recent public appearance, President Donald Trump expressed strong displeasure with the Iranian government’s alleged use of disinformation. He declared, “They’re a country based on disinformation,” and later, “And now they’re using disinformation plus AI. And that’s a terrible situation.” The condemnation carries a profound irony given Trump’s own well-documented history of disseminating false or misleading statements, a pattern that raises serious questions about his sincerity and about political discourse in the age of artificial intelligence.

The Irony of Accusation

Trump’s outburst, particularly his surprise at discovering Iran’s reliance on disinformation, suggests a remarkable, perhaps performative, lack of awareness. The statement, “I didn’t know this until recently, they’re a country based on disinformation,” was met with incredulity, highlighting the disconnect between his pronouncements and the perceived reality of global politics. The transcript notes, “Whoa. A country based on disinformation. What a nightmare. I can’t imagine what it must be like to live under such a ruthlessly dishonest regime.” This sentiment, while seemingly directed at Iran, could easily be turned inward, given Trump’s own prolific use of what critics label as falsehoods.

The hypocrisy is further amplified by the context. Trump’s criticism of Iran came amidst discussions about a potential deal and the ongoing conflict, a setting where factual accuracy is paramount. Yet, the very same press conference saw him making dubious claims about his past predictions, including a widely debunked assertion that he foresaw the 9/11 attacks and advised that Osama bin Laden should be apprehended. A fact-check by Voice of America, a government-funded news agency, revealed that his book, “The America We Deserve,” merely mentioned Bin Laden as one of many threats without specific predictions or calls for his assassination. This pattern of embellishment and misrepresentation is a recurring theme, making his condemnation of another nation’s disinformation tactics appear disingenuous.

AI: The New Frontier of Deception

Trump’s commentary also touched upon the burgeoning role of artificial intelligence in spreading disinformation. He stated, “And now they’re using disinformation plus AI. And that’s a terrible situation.” The transcript answers with a quip about AI’s legitimate uses, which reads as the commentator’s joke rather than Trump’s own words: “Artificial intelligence should be used for three things and three things only: Decoding animal communication, designing entirely new medicines that humans would never think to invent, and writing those two previous things I said when I asked AI what AI should be used for.” Trump’s remark, by contrast, suggests a fundamental misunderstanding of, or perhaps a selective focus on, the capabilities and ethical challenges posed by AI.

Trump’s own actions underscore the peril of AI-generated content. He shared an AI-generated video depicting Barack Obama being arrested and promoted an AI-altered image related to his plan for Gaza. These instances demonstrate not only a willingness to leverage AI for political messaging but also a potential susceptibility to its deceptive power. The transcript points out the irony: “Maybe Trump isn’t pissed that Iran is using AI. He’s just pissed that there’s no financial grift attached.” Furthermore, his own interactions with military officials, such as questioning a general about a supposedly burning aircraft carrier based on fabricated imagery, reveal a concerning tendency to accept and amplify misinformation, even when accurate information is readily available.

A Pattern of Misinformation

The phenomenon is not confined to Trump alone. The National Republican Senatorial Committee (NRSC) also released an ad featuring an AI-generated version of Democratic Senate hopeful James Talarico, illustrating a broader trend within political campaigns to employ synthetic media. This tactic, used to portray Talarico in a negative light, underscores the increasing reliance on fabricated content to shape public perception, especially in competitive races.

The underlying strategy, as suggested by the analysis, is rooted in a deliberate effort to create fear, chaos, and confusion. The transcript posits, “The Trump administration does not put a little spin on bad press or loosely try to reframe the narrative so that they’re seen in a more positive light. On a daily basis, they make a systematic effort to mislead voters by lying because they know that their team thrives under fear, chaos, and confusion.” This approach aims to deflect accountability for policy failures, such as rising gas and grocery prices or the expansion of military engagement, by blaming external factors like immigrants, Democrats, or DEI initiatives. The fabricated rumor about people eating dogs and cats during a migrant crisis exemplifies this tactic: even outlandish claims are floated with a dismissive “whatever the case may be” to sow doubt and distrust.

Why This Matters

The implications of this pervasive disinformation, amplified by AI, are significant for democratic societies. When political figures, including presidents, engage in or condone the spread of falsehoods, it erodes public trust in institutions, media, and the electoral process itself. The ease with which AI can generate convincing fake content poses an unprecedented challenge to discerning truth from fiction, potentially leading to manipulated public opinion and a fractured understanding of reality. The transcript’s closing remarks about the potential for administrations to lean on social media platforms to suppress critical coverage highlight the vulnerability of independent media and the importance of direct communication channels.

Historical Context and Future Outlook

The use of propaganda and disinformation is not new to politics. Throughout history, leaders have employed various methods to influence public opinion, from carefully crafted speeches to outright fabrications. However, the speed, scale, and sophistication offered by AI represent a quantum leap. The current landscape is a far cry from the era when a book or a newspaper article was the primary source of information, and fact-checking, while difficult, was more manageable. Today, a viral AI-generated video or deepfake can spread globally in minutes, making the task of correction and verification a constant uphill battle.

The future outlook is concerning. As AI technology advances, the ability to create hyper-realistic fake content will only improve, making it increasingly challenging for the average citizen to distinguish genuine from synthetic. This could lead to a perpetual state of skepticism, where even verified facts are dismissed as potential fabrications. The political strategy of thriving on chaos and confusion, as described in the transcript, is likely to become more entrenched, as it proves effective in mobilizing a base and obscuring accountability. The battle against disinformation will require a multi-pronged approach, involving technological solutions for detection, robust media literacy education, and a commitment from political actors to uphold factual integrity. Without such measures, the democratic process itself remains under threat.

Source: Confused Trump EMBARRASSES himself with OFF THE WALLS claim | Another Day (YouTube)