NVIDIA’s AI Unlocks True 3D Scene Reconstruction

NVIDIA's new PPISP technique dramatically improves 3D scene reconstruction by intelligently removing camera-induced distortions like incorrect exposure and color casts. This breakthrough promises more realistic virtual environments and advanced applications in gaming, film, and autonomous driving.

In a significant leap forward for artificial intelligence and computer graphics, NVIDIA researchers have unveiled a new technique capable of generating remarkably realistic 3D scenes from a collection of 2D images. This breakthrough addresses long-standing challenges in 3D reconstruction, promising to revolutionize fields from virtual reality and gaming to autonomous driving and filmmaking.

The Problem with Traditional 3D Reconstruction

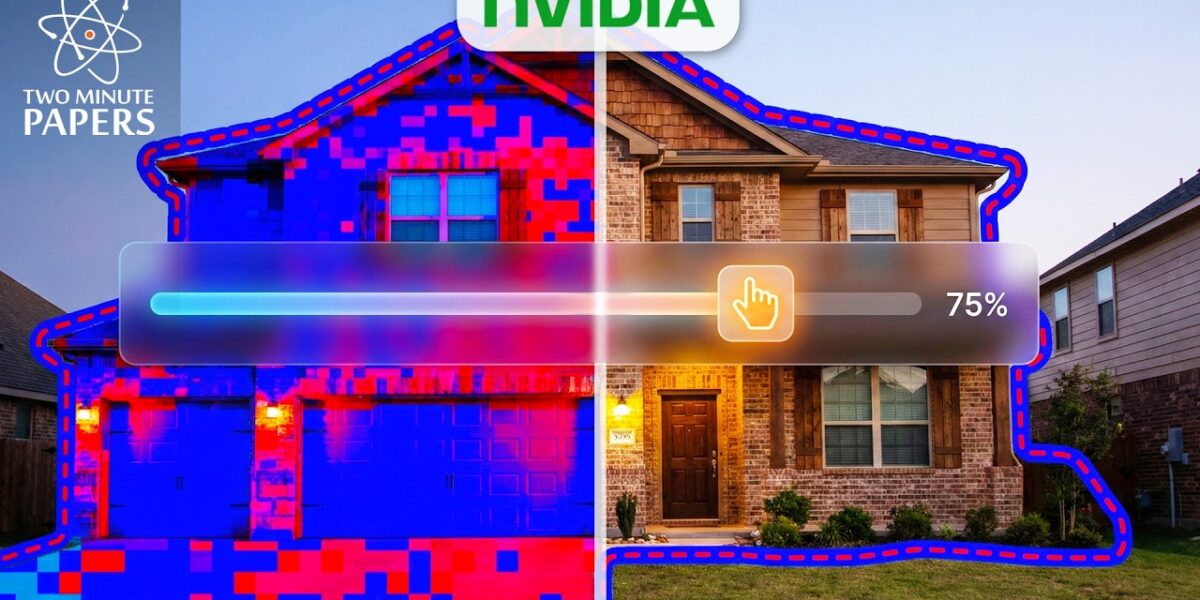

Creating accurate 3D models from photographs has historically been a complex endeavor. While techniques like Neural Radiance Fields (NeRF) have shown promise, they often struggle with inconsistencies between the input photos that come from varying lighting conditions and automatic camera settings. Imagine trying to build a 3D model of a house from photos taken on different days. One day it might look bluish, the next reddish, not because the house changed color, but because the camera’s automatic settings (like exposure and white balance) adjusted to the ambient light. This leads to artifacts like ‘floaters’, ghostly blobs in the final 3D model that appear as the AI tries to reconcile these visual discrepancies.

Previous methods often failed to account for these subtle but crucial variations. The AI would mistakenly interpret changes in lighting or camera exposure as actual changes in the object’s color or texture, resulting in blurry, inaccurate, and often unusable 3D representations. This limitation has hindered the widespread adoption of AI-powered 3D reconstruction for high-fidelity applications.

NVIDIA’s PPISP: A Master Detective for Reality

NVIDIA’s new technique, dubbed PPISP (for physically plausible image signal processing, or ISP), tackles this problem by acting like a detective. Instead of focusing solely on the final image, it analyzes the ‘sunglasses’ each photo was taken through: the camera’s parameters and settings that alter the true appearance of the scene. PPISP separates the intrinsic properties of the scene (like its true color and geometry) from the camera-side factors (like exposure, white balance, and lens effects).

The core innovation lies in PPISP’s ability to mathematically model and then remove these camera-induced distortions. It first identifies and corrects for exposure variations, then reverses the effects of white balance (essentially removing the ‘colored sunglasses’ effect). Crucially, it also learns and accounts for physical imperfections in camera lenses, such as vignetting (where image edges are darker than the center), and even the non-linear way digital sensors capture light (the camera response curve).
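One way to write this idea down is sketched below. This is an illustrative formulation, not the equations from NVIDIA’s work, and every symbol is an assumption introduced here: $L(\mathbf{x})$ is the linear scene radiance (an RGB vector) seen at pixel $\mathbf{x}$, $e_k$ is the exposure offset of image $k$ in stops, $W_k$ is a diagonal matrix of white balance gains, $v_k(\mathbf{x})$ is a scalar vignetting falloff, and $f_k$ is the camera response curve applied per channel.

```latex
% Forward model (all symbols are illustrative assumptions)
I_k(\mathbf{x}) = f_k\!\left( v_k(\mathbf{x}) \, W_k \, 2^{e_k} \, L(\mathbf{x}) \right)

% Inverse: recover scene radiance from the recorded pixel
L(\mathbf{x}) = 2^{-e_k} \, W_k^{-1} \, \frac{f_k^{-1}\!\left( I_k(\mathbf{x}) \right)}{v_k(\mathbf{x})}
```

Under this sketch, two photos of the same surface point can record different pixel values $I_k$ while sharing a single $L$, which is the quantity the 3D reconstruction should store.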

How PPISP Works: Deconstructing the Image

PPISP breaks the camera’s influence on each image into four components, each addressing a specific source of visual error:

- Exposure Offset: The AI estimates how much brighter or darker each image was captured relative to the underlying scene, so that exposure differences between photos can be cancelled out.

- White Balance Correction: It identifies and removes color casts caused by different lighting conditions, revealing the object’s true colors. This is analogous to removing the tint from different colored sunglasses.

- Vignetting Correction: The AI learns the specific darkening effect that occurs towards the edges of an image due to lens characteristics, effectively reverse-engineering the camera lens itself.

- Camera Response Curve Flattening: It accounts for the non-linear way digital sensors convert light into digital signals, ensuring a more accurate representation of brightness and color.

By modeling these four ‘puzzles’ separately, PPISP can estimate what the scene looked like before the camera’s settings and imperfections altered it. The result is a significantly cleaner and more photorealistic 3D scene, free from the ghostly artifacts that plagued previous methods.
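The four steps above can be sketched as a tiny forward and inverse camera model. This is a minimal illustration under stated assumptions, not NVIDIA’s implementation: the function names, the radial falloff formula, and the use of a plain gamma curve as the response curve are all hypothetical simplifications, and the parameters are given by hand where a real method would learn them from the photos.

```python
def apply_camera(radiance, pos, exposure_stops, wb_gains, vignette_k, gamma):
    """Forward model: linear scene radiance (r, g, b) -> recorded pixel value.

    pos is the pixel's normalized distance from the image center
    (0 = center, 1 = corner). All parameters are hypothetical stand-ins
    for what a method like PPISP would estimate.
    """
    gain = 2.0 ** exposure_stops                    # 1. exposure offset, in stops
    falloff = 1.0 / (1.0 + vignette_k * pos ** 2)   # 3. radial vignetting
    out = []
    for value, wb in zip(radiance, wb_gains):
        linear = value * gain * wb * falloff        # 2. per-channel white balance
        linear = min(max(linear, 0.0), 1.0)         # sensor clipping
        out.append(linear ** (1.0 / gamma))         # 4. response curve (simple gamma)
    return tuple(out)


def undo_camera(pixel, pos, exposure_stops, wb_gains, vignette_k, gamma):
    """Inverse model: recorded pixel -> estimated linear scene radiance.

    Only exact for pixels that were not clipped by the sensor.
    """
    gain = 2.0 ** exposure_stops
    falloff = 1.0 / (1.0 + vignette_k * pos ** 2)
    return tuple((p ** gamma) / (gain * wb * falloff)
                 for p, wb in zip(pixel, wb_gains))


# The same surface point photographed by two differently configured cameras.
true_color = (0.20, 0.30, 0.25)
cam_a = dict(exposure_stops=0.5, wb_gains=(1.2, 1.0, 0.8), vignette_k=0.4, gamma=2.2)
cam_b = dict(exposure_stops=-1.0, wb_gains=(0.9, 1.0, 1.3), vignette_k=0.1, gamma=2.2)

pixel_a = apply_camera(true_color, 0.2, **cam_a)   # near the image center
pixel_b = apply_camera(true_color, 0.9, **cam_b)   # near the image corner

recovered_a = undo_camera(pixel_a, 0.2, **cam_a)
recovered_b = undo_camera(pixel_b, 0.9, **cam_b)
```

In this sketch `pixel_a` and `pixel_b` differ noticeably even though they show the same surface, while `recovered_a` and `recovered_b` both land back on `true_color`. That agreement is what lets a reconstruction store one consistent color per surface point.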

Reinventing the Digital Camera’s Brain

Interestingly, the controller developed by NVIDIA to fix exposure for new views functions remarkably like the auto-exposure systems found in modern smartphones. Researchers have essentially recreated the ‘brain’ of a digital camera within a neural network, allowing for dynamic and accurate adjustments that mimic real-world photography while preserving scene integrity.
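For comparison, a classic hand-written auto-exposure rule can be sketched in a few lines. This is not the neural controller described above, only a well-known heuristic of the kind it resembles: pick the exposure offset that moves the average log brightness of the view to a mid-gray target. The function name and the 0.18 target are assumptions made for this example.

```python
import math


def auto_exposure_stops(linear_luminances, target=0.18):
    """Exposure offset (in stops) that maps the geometric-mean luminance to `target`."""
    if not linear_luminances:
        raise ValueError("need at least one luminance sample")
    eps = 1e-6  # avoid log2(0) on pure black pixels
    log_mean = sum(math.log2(max(v, eps)) for v in linear_luminances) / len(linear_luminances)
    return math.log2(target) - log_mean


# A dim rendered view: every sample is well below mid-gray.
dim_view = [0.02, 0.03, 0.05, 0.04]
stops = auto_exposure_stops(dim_view)          # positive: the view needs brightening
exposed = [v * 2.0 ** stops for v in dim_view]
```

A learned controller would replace the fixed formula with a network that predicts the offset from the image content, but the input and output play the same roles as in this sketch.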

Limitations and Future Directions

While PPISP represents a major advancement, it is not without limitations. The current model assumes that camera effects follow one global rule per image: the same exposure, white balance, vignetting profile, and response curve apply to every pixel. However, modern smartphone cameras often employ local tone mapping, selectively brightening or darkening specific areas (like a face or a bright window) to improve the image. These spatially adaptive effects deviate from the physical equations PPISP expects, so its global model cannot fully explain them.
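A toy example makes the limitation concrete. The sketch below is an assumption-laden illustration, not part of NVIDIA’s method: it fits the best single brightness gain to a row of four pixels, first for a camera that applies one gain everywhere and then for a hypothetical local tone mapper that lifts shadows more than highlights.

```python
def best_global_gain(scene, observed):
    """Least-squares single gain g that minimizes sum((g * s - o) ** 2)."""
    num = sum(s * o for s, o in zip(scene, observed))
    den = sum(s * s for s in scene)
    return num / den


def max_residual(scene, observed):
    """Largest per-pixel error left after fitting one global gain."""
    g = best_global_gain(scene, observed)
    return max(abs(g * s - o) for s, o in zip(scene, observed))


scene = [0.05, 0.10, 0.40, 0.80]            # linear radiance, shadows to highlights
global_cam = [1.5 * s for s in scene]       # one gain for every pixel

# Hypothetical local tone mapping: shadows lifted 3x, highlights left alone.
local_gains = [3.0, 2.5, 1.2, 1.0]
local_cam = [g * s for g, s in zip(local_gains, scene)]

global_error = max_residual(scene, global_cam)   # effectively zero
local_error = max_residual(scene, local_cam)     # clearly non-zero
```

The global camera is explained almost perfectly by one gain, while the locally tone-mapped image leaves a large residual no matter which single gain is chosen. In a reconstruction, that leftover error has to go somewhere, and it tends to end up baked into the scene itself.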

The NVIDIA research team has made their work publicly available, a move lauded as a significant gift to the AI and computer graphics communities. This open approach encourages further development and exploration of these powerful 3D reconstruction capabilities.

Why This Matters: Real-World Impact

The implications of PPISP are far-reaching:

- Virtual and Augmented Reality: Developers can create more immersive and believable virtual environments, enhancing gaming and training simulations.

- Filmmaking and Special Effects: Realistic 3D asset creation becomes more accessible, streamlining visual effects workflows and enabling new cinematic possibilities.

- Autonomous Vehicles: More accurate 3D mapping of environments can improve the perception systems of self-driving cars, leading to safer navigation.

- Digital Archiving and Preservation: Historical sites, objects, and even events can be captured and preserved in highly detailed and accurate 3D models.

- E-commerce and Design: Products can be rendered in realistic 3D, allowing customers to view them from all angles before purchase.

By enabling the creation of high-fidelity 3D representations from readily available 2D images, NVIDIA’s PPISP is poised to unlock a new era of digital content creation and spatial computing.

Source: NVIDIA’s Insane AI Found The Math Of Reality (YouTube)