New Satellites Capture ‘Impossible’ Colors from Space

New hyperspectral satellites are capturing images with hundreds of color bands, revealing details invisible to the human eye and traditional cameras. This advanced technology builds on a 200-year legacy of spectral analysis, enabling unprecedented insights into Earth's resources and environment.

For decades, satellite imagery has offered humanity an unprecedented view of our planet. We’ve grown accustomed to seeing detailed pictures of Earth, often captured just hours after major events. But a new generation of satellites goes further, recording ‘impossible’ colors that reveal details previously hidden from view.

Beyond Red, Green, and Blue

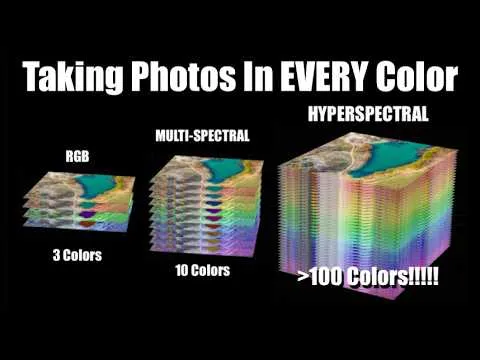

Your eyes sense color with three types of receptors, broadly tuned to red, green, and blue light. Weather satellites typically use around 16 color bands to capture different atmospheric conditions. Now, new hyperspectral satellites are capturing hundreds of distinct color bands for every single point, or pixel, in an image. This allows them to see far beyond what human eyes can perceive.

Imagine trying to spot camouflaged military equipment. To us, green paint and green leaves might look similar. But to a hyperspectral imager, their ‘colors’ – how they reflect light across a wide spectrum – are vastly different. Just as increasing the detail in a picture lets you see smaller objects, increasing the detail in color, or spectral resolution, lets you see hidden features.
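The camouflage example can be made concrete with a small sketch. It compares two spectra using the spectral angle, one common similarity measure in remote sensing; the article does not say which measure real systems use, and the reflectance numbers below are invented purely for illustration.

```python
import math

def spectral_angle(a, b):
    """Angle in radians between two spectra treated as vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.acos(max(-1.0, min(1.0, dot / (norm_a * norm_b))))

# Made-up reflectances in blue, green, red, and near-infrared bands.
# Assumption for illustration: the paint matches the leaf in visible
# light but reflects far less in the near-infrared.
leaf = [0.04, 0.10, 0.05, 0.50]
paint = [0.04, 0.10, 0.05, 0.08]

rgb_angle = spectral_angle(leaf[:3], paint[:3])  # visible bands only
full_angle = spectral_angle(leaf, paint)         # near-infrared included

print(f"visible only: {rgb_angle:.3f} rad, with near-infrared: {full_angle:.3f} rad")
```

With only the three visible bands the two materials are indistinguishable; adding a single extra band separates them, and hundreds of bands give many more chances to find such a difference.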

A Legacy of Spectral Analysis

The idea of analyzing light’s color spectrum isn’t new. Astronomers have used it for over 200 years to understand what stars and the universe are made of. In fact, the element helium was first discovered by its unique spectral signature in the sun’s atmosphere. The name ‘helium’ comes from ‘helios,’ the Greek word for sun.

Now, with advanced technology, scientists can apply these powerful spectral analysis techniques to nearly every pixel captured from space. This means we can survey Earth like never before, identifying healthy versus sick plants, spotting specific minerals in rocks, and detecting signs of human activity that were once nearly impossible to find without physically visiting a location.
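As a rough illustration of the plant-health case, the sketch below computes the Normalized Difference Vegetation Index (NDVI), a widely used index built from just two bands, red and near-infrared. It is a far simpler tool than a full hyperspectral analysis, and the article does not name it; the reflectance values are hypothetical.

```python
def ndvi(red, nir):
    """Normalized Difference Vegetation Index from red and near-infrared reflectance."""
    total = nir + red
    if total == 0:
        return 0.0  # no signal in either band; avoid dividing by zero
    return (nir - red) / total

# Hypothetical reflectances: healthy leaves absorb red light and
# reflect strongly in the near-infrared; stressed leaves do so less.
healthy = ndvi(red=0.05, nir=0.50)
stressed = ndvi(red=0.12, nir=0.30)

print(f"healthy: {healthy:.2f}, stressed: {stressed:.2f}")
```

A hyperspectral imager applies the same idea with hundreds of narrow bands instead of two broad ones, which is what lets it pick out subtler signatures such as specific minerals.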

From Lab to Orbit: The Evolution of Hyperspectral Imaging

Hyperspectral imaging itself is not a brand-new concept. NASA’s Jet Propulsion Laboratory (JPL) developed an early hyperspectral imager called AVIRIS back in the 1980s. This instrument flew on high-altitude NASA aircraft, like the ER-2 (a version of the U-2 spy plane). However, the technology of the 1980s meant this data was stored on tapes and took days to process after flights.

Today, miniaturized electronics, faster computers, and advanced communication systems make it possible to handle the massive amounts of data hyperspectral imaging generates. This has led to commercial satellite companies, such as Planet Labs with its Tanager satellite and Pixxel with its Firefly satellites, offering this advanced capability to a wider range of customers.

How Do We Capture Hundreds of Colors?

Capturing so much color information presents a unique technical challenge. A typical digital camera uses a ‘Bayer mask’ in front of its sensor. This mask has a pattern of red, green, and blue filters, allowing each pixel to record only one of those three colors. The camera then interpolates to create a full-color image.
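The interpolation step can be sketched in a few lines. This is a minimal illustration assuming an RGGB layout and the simplest possible rule (averaging neighboring green pixels); real cameras use more sophisticated methods, and the raw values here are invented.

```python
def bayer_channel(row, col):
    """Color recorded at (row, col), assuming an RGGB Bayer layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"

def green_at(raw, row, col):
    """Green value at a pixel: measured directly, or averaged from neighbors."""
    if bayer_channel(row, col) == "G":
        return raw[row][col]
    # At red and blue sites, the four edge-adjacent pixels are all green.
    neighbors = [(row - 1, col), (row + 1, col), (row, col - 1), (row, col + 1)]
    values = [raw[r][c] for r, c in neighbors
              if 0 <= r < len(raw) and 0 <= c < len(raw[0])]
    return sum(values) / len(values)

# Invented 4x4 raw sensor readout (one number per pixel).
raw = [
    [10, 20, 10, 20],
    [20, 30, 24, 30],
    [10, 28, 10, 20],
    [20, 30, 20, 30],
]

print(bayer_channel(1, 1), green_at(raw, 1, 1))
```

The blue pixel at row 1, column 1 never measured green at all; its green value is a guess built from its neighbors, which is exactly the kind of spatial detail that gets traded away.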

To get hundreds of colors, you can’t simply use a more complex filter mask. Doing so would sacrifice spatial detail, making it hard to distinguish between changes in color and changes in brightness. Instead, many scientific imaging systems capture images one color band at a time, using high-quality filters that cover the entire sensor.

This process often involves rotating a wheel of different filters into place. While effective, it means taking multiple pictures sequentially, which can cause problems. If the target is moving, like a planet passing by a spacecraft, slight time differences between capturing each color band can create color ‘fringes’ around moving objects. The more color bands you need, the longer this takes and the more pronounced the fringing becomes.
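A back-of-the-envelope sketch shows how the fringing grows with the number of bands. The exposure time per band and the target's apparent speed are hypothetical numbers, chosen only to show the scaling.

```python
def fringe_offset_px(num_bands, seconds_per_band, target_speed_px_per_s):
    """Apparent shift of a moving target between the first and last band."""
    elapsed = (num_bands - 1) * seconds_per_band
    return elapsed * target_speed_px_per_s

# Hypothetical values: half a second per filter, target drifting 2 pixels/second.
three_bands = fringe_offset_px(3, 0.5, 2.0)
hundred_bands = fringe_offset_px(100, 0.5, 2.0)

print(f"3 bands: {three_bands} px, 100 bands: {hundred_bands} px")
```

Under these assumptions, a three-filter sequence smears the target by a couple of pixels, while a hundred-filter sequence smears it across nearly a hundred.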

Innovative Filter Technologies

To overcome these limitations, scientists and engineers have developed innovative technologies. One approach uses tunable filters, like liquid crystal tunable filters (LCTFs). These devices use layers of liquid crystals and other materials to precisely adjust the wavelength of light that passes through. This allows a single filter to rapidly change its ‘color’ setting, capturing many spectral bands without needing hundreds of separate physical filters.

Another method involves splitting light into different paths. Early high-quality video cameras used multiple sensors, each dedicated to red, green, or blue light, to capture more light and detail. Modern systems can use prisms and specialized filters to split incoming light into different spectral ranges – visible, near-infrared, and far-infrared – sending each to a separate sensor. While this is often called multispectral imaging (typically tens of bands), it shares principles with hyperspectral techniques.

Spectroscopy: Analyzing Light’s Fingerprint

Astronomers have long used tools like prisms and diffraction gratings to spread starlight into its component colors, creating a spectrum. A diffraction grating, a surface with tiny, precisely spaced lines, works like the surface of a CD or DVD, scattering light into a rainbow. By analyzing the bright and dark lines in a star’s spectrum, scientists can identify the elements present.

Applying this to Earth imaging requires a different approach. Instead of capturing the spectrum of a single star, we need to capture the spectrum of every pixel. One common technique for satellites is ‘push broom’ imaging. The satellite moves across the Earth’s surface, and a narrow strip of the ground is imaged line by line. As the light from each line passes through a diffraction grating or a specialized filter, it’s spread into its spectrum, creating a 2D image where one dimension is space and the other is color.
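The push broom idea can be sketched as stacking frames. In this minimal illustration, `read_frame` is a hypothetical stand-in for one sensor readout (it just produces recognizable numbers), and the tiny dimensions are chosen for readability rather than realism.

```python
def read_frame(line_index, width, bands):
    """Hypothetical stand-in for one sensor readout.

    Returns frame[x][band]: one axis is position across the ground
    strip, the other is color (spectral band).
    """
    return [[line_index * 100 + x * 10 + b for b in range(bands)]
            for x in range(width)]

def assemble_cube(num_lines, width, bands):
    """Stack successive frames as the satellite moves along its track."""
    return [read_frame(y, width, bands) for y in range(num_lines)]

def band_image(cube, band):
    """Extract an ordinary 2D picture for a single spectral band."""
    return [[pixel[band] for pixel in line] for line in cube]

cube = assemble_cube(num_lines=4, width=3, bands=5)
spectrum = cube[2][1]        # full spectrum of one ground pixel
image = band_image(cube, 0)  # whole scene in the first band

print(spectrum)
print(image)
```

Each readout contributes one line of the final picture, and the satellite's own motion supplies the second spatial dimension.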

For example, Planet Labs’ Tanager satellite, with a resolution of 30-35 meters, captures 424 spectral bands from visible light into the infrared. Because the sensor must read out a complete frame for every line of ground the satellite passes over, it needs fast readout speeds, similar to the high-speed slow-motion modes found on smartphones.
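A quick estimate shows why readout speed matters for a push broom imager: every line of ground is a separate sensor readout. The ground speed below is an assumed round figure for a satellite in low Earth orbit, not a published Tanager specification, and the sketch ignores any pointing maneuvers a satellite might use to slow its scan.

```python
def lines_per_second(ground_speed_m_s, ground_sample_m):
    """Sensor readouts per second needed to image every line of ground once."""
    return ground_speed_m_s / ground_sample_m

# Assumption: roughly 7 km/s over the ground for a low Earth orbit.
ASSUMED_GROUND_SPEED_M_S = 7000.0

rate_30m = lines_per_second(ASSUMED_GROUND_SPEED_M_S, 30.0)
rate_35m = lines_per_second(ASSUMED_GROUND_SPEED_M_S, 35.0)

print(f"30 m pixels: {rate_30m:.0f} readouts/s, 35 m pixels: {rate_35m:.0f} readouts/s")
```

Under these assumptions the sensor needs on the order of a couple of hundred readouts per second, several times the frame rate of ordinary video.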

Snapshot Hyperspectral Imaging

While push broom imaging is efficient for satellites, researchers are also developing ‘snapshot’ hyperspectral cameras. These aim to capture a full 3D data cube (two spatial dimensions plus the spectral dimension) in a single exposure, without scanning.
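To get a feel for the size of such a data cube, here is a rough calculation. The image dimensions and the 2 bytes per sample are hypothetical; only the band count of 424 comes from the Tanager figure quoted in this article.

```python
def cube_bytes(width, height, bands, bytes_per_sample):
    """Uncompressed size of an image with the given number of bands."""
    return width * height * bands * bytes_per_sample

# Hypothetical 640 x 1000 pixel scene.
hyperspectral = cube_bytes(640, 1000, bands=424, bytes_per_sample=2)
ordinary_rgb = cube_bytes(640, 1000, bands=3, bytes_per_sample=1)

print(f"hyperspectral: {hyperspectral / 1e6:.0f} MB, RGB: {ordinary_rgb / 1e6:.2f} MB")
print(f"ratio: {hyperspectral / ordinary_rgb:.0f}x")
```

Even this small scene is hundreds of times larger than the equivalent ordinary color photo, which is why the data handling is as much of a challenge as the optics.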

Some designs use fiber optics to route light from each pixel to a spectrometer, effectively capturing the spectrum for every point simultaneously. Others use complex coded masks and advanced mathematical algorithms to reconstruct the spectral information from a single, seemingly scrambled image. A related design, the ‘computed tomography imaging spectrometer,’ records several projections of the 3D data cube at once, as if viewing it from multiple angles, and reconstructs the cube from them, similar to how CAT scans work in medicine.

The Future of Seeing

These advanced hyperspectral imaging capabilities are opening up new frontiers. From monitoring crop health and managing land resources to identifying military assets, the applications are vast. The ability to see the world in hundreds of ‘impossible’ colors promises a deeper understanding of our planet and a more detailed view of the universe around us. As technology continues to advance, we can expect even more ingenious ways to capture and interpret the rich spectral tapestry of light.

By Scott Manley

Source: New Hyperspectral Satellites See 'Impossible' Color Details (YouTube)