Google’s AI Edge Gallery Brings Powerful Models to Your Phone

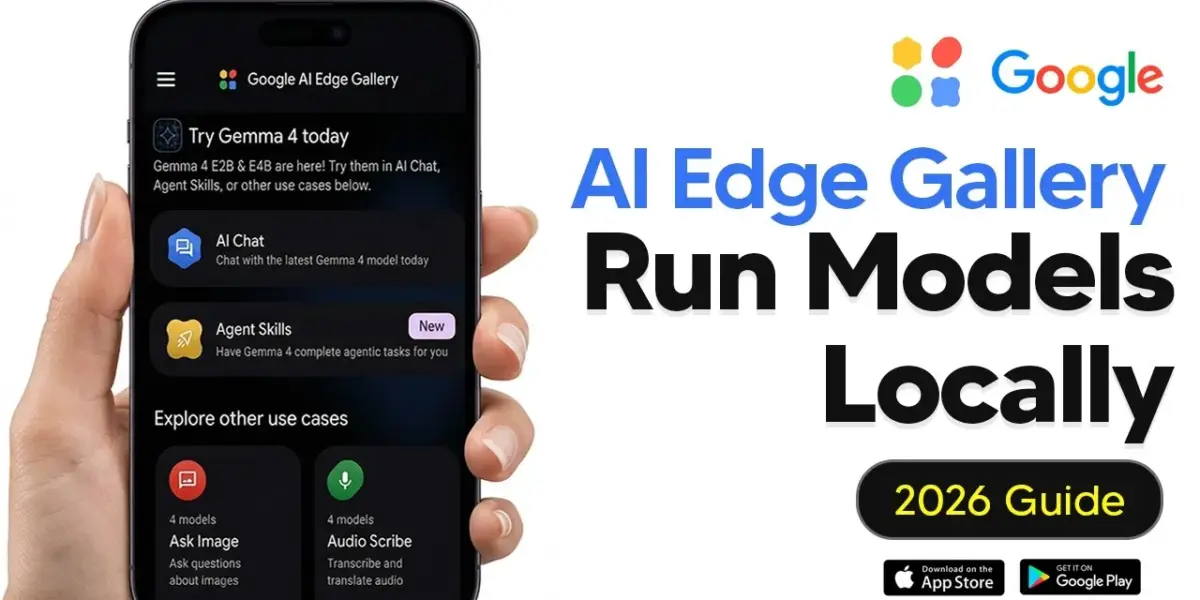

Google's new AI Edge Gallery app lets users run advanced AI models like Gemma directly on their smartphones for free and privately. The app supports AI chat, image analysis, audio transcription, and even experimental device control, making powerful AI accessible on the go.

Google has launched a new application called the AI Edge Gallery, which lets users run advanced artificial intelligence models directly on their smartphones. This means you can use powerful AI tools privately, for free, and, once a model has been downloaded, without an internet connection.

Easy Access for Everyone

Getting started with the AI Edge Gallery is straightforward. The app is available on both Android and iOS devices through their respective app stores. There’s no need to join a waitlist or have a special developer account. Simply download the app, and you’re ready to explore its features.

Understanding the AI Edge Gallery

The AI Edge Gallery acts as a hub for Google’s AI models. You can discover, download, and run these models directly on your phone. Think of it like running a large language model (LLM) on your computer, but now it’s on your mobile device. The app offers various modes, like ‘Ask Image’ for image-related tasks and ‘Audio Scribe’ for handling audio, each designed for specific AI functions.

AI Chat: Your Pocket Assistant

The most commonly used feature is likely the AI chat. This allows you to have conversations with an on-device AI model. You can download different versions of models, such as Google’s Gemma models. The performance you experience depends on your phone’s hardware.

Device Compatibility: What You Need

On Android, a phone released in the last few years with 8 GB of RAM or more can likely run the Gemma 4 models smoothly. Phones with 12 GB of RAM will handle them even better. Older phones with 4 to 6 GB of RAM might struggle with the larger Gemma 4 models.

On the iPhone side, the iPhone 15 Pro and newer models can run the AI models easily. iPads with M-series chips and 8 to 16 GB of RAM are also good options. Older iPhones like the 13 or 14, which have 4 to 6 GB of RAM, might work with smaller Gemma variants, but larger models are not recommended on them. Generally, 8 GB of RAM or more is best, and devices older than an iPhone 12, or with 3 GB of RAM or less, are not advised.
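The RAM guidance above can be condensed into a small lookup. This is only a sketch of the article's rules of thumb: the function name and return strings are made up for illustration, the treatment of devices between 6 and 8 GB is an assumption (the guidance does not cover them), and the app itself may decide differently.

```python
def recommend_model_tier(ram_gb: float) -> str:
    """Map device RAM to a rough recommendation (hypothetical helper)."""
    if ram_gb <= 3:
        # 3 GB or less: the article advises against using the device.
        return "not recommended"
    if ram_gb < 8:
        # 4 to 6 GB per the article; 6 to 8 GB is grouped here as an assumption.
        return "smaller Gemma variants only"
    if ram_gb < 12:
        # 8 GB or more: larger models should likely run smoothly.
        return "larger models should run smoothly"
    # 12 GB or more: described as handling the models even better.
    return "larger models with headroom"


if __name__ == "__main__":
    for ram in (3, 6, 8, 12):
        print(f"{ram} GB: {recommend_model_tier(ram)}")
```

RAM is only one factor. The chip and the age of the phone also matter, so treat the result as a starting point.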

Downloading and Running Models

Inside the AI chat section, you’ll see a list of available models. You can download as many as your phone’s storage allows. Larger models generally offer more advanced reasoning abilities, while smaller models, like the 1-billion-parameter Gemma 3, are suitable for older devices.

Once a model is downloaded, you can start a chat. The model needs a moment to initialize, usually about 10-15 seconds, before it’s ready. You can easily switch between different downloaded models through a dropdown menu.

Fine-Tuning Your AI Experience

The AI Edge Gallery offers settings to customize how the AI responds. The ‘Thinking’ option, which is off by default, allows the model more time to process complex, multi-step requests. For simple questions, it’s not necessary.

Temperature: This setting controls how random or creative the AI’s responses are. A balanced setting is good for general chat. Increasing it can lead to more creative but potentially nonsensical answers, while lowering it makes the responses more predictable and safe.

Top K and Top P: These settings influence the AI’s word choices. Top K limits the model to considering only the ‘K’ most likely next words. Top P limits it to the smallest set of likely words whose combined probability reaches ‘P’, so lower values make the choices more focused and higher values allow more variety. For most users, the default settings are recommended, especially if you’re not a developer.

CPU vs. GPU: It’s best to use the GPU for AI tasks. The CPU can also run the models, but the GPU is significantly faster and uses less battery.
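To make the Temperature, Top K, and Top P settings described above more concrete, here is a minimal sketch of how these three knobs are commonly applied when a model picks its next word. It is a generic illustration and not the app’s actual code. The function names, the default values, and the toy scores are assumptions, and real implementations may apply the steps in a different order.

```python
import math
import random


def candidate_tokens(logits, temperature=1.0, top_k=40, top_p=0.95):
    """Return the (token, probability) pairs that survive all three settings."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    if top_k < 1:
        raise ValueError("top_k must be at least 1")

    # Temperature: divide the raw scores before the softmax. Values below 1
    # sharpen the distribution (more predictable); values above 1 flatten it.
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    total = sum(exps.values())
    ranked = sorted(
        ((tok, e / total) for tok, e in exps.items()),
        key=lambda pair: pair[1],
        reverse=True,
    )

    # Top K: keep only the K most likely tokens.
    ranked = ranked[:top_k]

    # Top P: keep the smallest prefix whose cumulative probability reaches P.
    kept, cumulative = [], 0.0
    for tok, prob in ranked:
        kept.append((tok, prob))
        cumulative += prob
        if cumulative >= top_p:
            break
    return kept


def sample_next_token(logits, rng=None, **settings):
    """Draw one token from the filtered candidates, weighted by probability."""
    rng = rng or random.Random()
    kept = candidate_tokens(logits, **settings)
    tokens = [tok for tok, _ in kept]
    weights = [prob for _, prob in kept]  # choices() renormalizes the weights
    return rng.choices(tokens, weights=weights, k=1)[0]


if __name__ == "__main__":
    # Toy scores for the word after "My favorite pet is a ..."
    toy_logits = {"cat": 2.0, "dog": 1.0, "fish": 0.0, "xylophone": -3.0}
    print(candidate_tokens(toy_logits))
    print(sample_next_token(toy_logits, temperature=0.7))
```

With these toy scores, the defaults keep “cat”, “dog”, and “fish” and drop the unlikely “xylophone”. Lowering the temperature to 0.1 leaves only “cat”, which is why low temperatures give more predictable answers.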

Privacy First: On-Device Processing

A key benefit of the AI Edge Gallery is privacy. All processing happens directly on your device, meaning your data and conversations are not sent to any servers. This keeps your information secure and private.

Exploring Agent Skills

Beyond basic chat, the ‘Agent Skills’ section lets you use predefined prompts to guide the AI. Each skill is a reusable set of instructions that tells the model how to carry out a particular kind of task. You can use the basic skills provided or import your own from a URL or local file, allowing for more customized AI interactions.

For instance, you can import a skill to help generate video scripts in a specific documentary style. The app also supports multimodal models, meaning they can understand and process images and audio. You can upload a picture and ask the AI what it sees, or record audio and have the AI transcribe it.

Image and Audio Capabilities

The image features allow you to upload photos and ask the AI to describe them. This is useful when you need information about something but don’t have internet access. Similarly, the ‘Audio Scribe’ mode lets you record or upload audio files for transcription. The audio feature can be a bit quirky, sometimes requiring a restart before it accepts a new audio input, but it generally works well for transcribing speech.

Mobile Actions: Controlling Your Device

An experimental feature called ‘Mobile Actions’ allows the AI to control certain functions on your phone. For example, you can ask the AI to turn your flashlight on or off. While still in early development, this hints at a future where AI can more seamlessly interact with and control your device’s features.
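The source does not describe how ‘Mobile Actions’ works internally. One common pattern for this kind of feature is function calling: the model emits a structured request, and the app maps it to a real device function. The sketch below illustrates that general idea only. The JSON shape, the `set_flashlight` action, and the `FakePhone` class are all hypothetical and are not taken from the app.

```python
import json


class FakePhone:
    """Stand-in for real device APIs (hypothetical, for illustration only)."""

    def __init__(self):
        self.flashlight_on = False


def set_flashlight(phone, on):
    """Hypothetical action: switch the flashlight on or off."""
    phone.flashlight_on = bool(on)
    return f"flashlight {'on' if phone.flashlight_on else 'off'}"


# Allow-list of actions the model may trigger; anything else is ignored.
ACTIONS = {"set_flashlight": set_flashlight}


def dispatch(phone, model_output):
    """Parse the model's structured output and run the matching action."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError:
        return "ignored: not a structured action"
    if not isinstance(call, dict):
        return "ignored: not a structured action"

    handler = ACTIONS.get(call.get("name"))
    if handler is None:
        return "ignored: unknown action"

    args = call.get("args", {})
    if not isinstance(args, dict):
        return "ignored: bad arguments"
    try:
        return handler(phone, **args)
    except TypeError:
        # Missing or unexpected argument names.
        return "ignored: bad arguments"


if __name__ == "__main__":
    phone = FakePhone()
    # Assumed model output for the request "turn on my flashlight".
    print(dispatch(phone, '{"name": "set_flashlight", "args": {"on": true}}'))
```

Restricting the model to an explicit allow-list of actions is a deliberate choice in this sketch: output that does not match a known action is ignored instead of being run.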

Prompts Lab: Streamlining Tasks

The ‘Prompts Lab’ offers a collection of preset prompts for common tasks. You can use it to summarize text, rewrite content in different tones (casual, friendly, polite), or even generate code snippets. This feature is helpful for quickly performing tasks without needing to craft complex prompts each time.

Why This Matters

The AI Edge Gallery democratizes access to powerful AI technology. By enabling on-device processing, it enhances privacy and makes AI accessible even without a constant internet connection. This opens up possibilities for a wide range of applications, from personal productivity tools to assistive technologies, all running efficiently and privately on your mobile device.

Source: Google AI Edge Gallery Tutorial – How To Run LLMS Locally On Your Phone (YouTube)