Social Media’s Grip: Are Kids Falling Prey to Digital Traps?

Citizens express deep concern over social media's addictive design and its impact on children's attention spans. They worry about exposure to inappropriate content and online predators, calling for accountability from social media giants. The immense profits these companies make should be matched by a commitment to protecting young users.

Many parents and citizens are worried about how social media affects children. They believe these platforms are deliberately designed to be addictive. Endless scrolling and a steady stream of quick updates can hook users, much as a slot machine does. This design, they argue, can shorten attention spans and make it harder for kids to focus on other things. It's like giving a child a constant stream of candy; before long, they may not want to eat their vegetables.

There are also concerns about the content children are exposed to. Some worry about influencers promoting unhealthy behaviors, such as gambling or flaunting wealth. These role models can pass on bad habits instead of positive ones. Then there is the issue of inappropriate content that is beyond a child's understanding or maturity level. This can include explicit material or even dangerous online spaces.

One speaker described the problem this way: "The pervasiveness of porn, the chat rooms that are within apps and within games where people prey on children. We've seen some of that firsthand."

The possibility of online predators using these platforms to harm children is a grave concern. Chat rooms built into games and apps can become dangerous places, where predators can exploit young users who may not understand the risks involved.

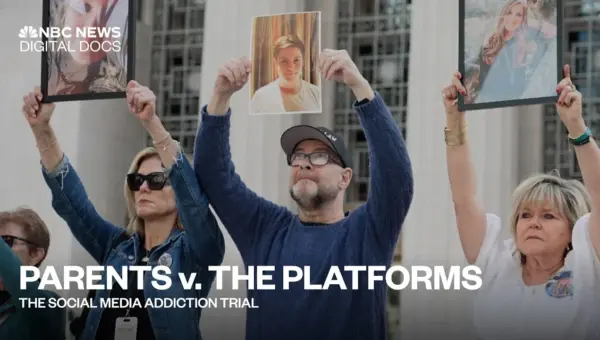

Holding Platforms Accountable

This leads to a crucial question: should social media companies be held responsible for these addictive features and potential harms? Some believe that if platforms facilitate dangerous activities, they should be held accountable. If a company builds a road that leads directly to a dangerous cliff, shouldn't it take some responsibility for what happens there?

Others stress that more needs to be done to prevent children from reaching the darker parts of the internet. The problem is not limited to kids; even adults struggle with the internet's downsides. Current safety measures may not be enough to protect vulnerable users.

There’s a strong feeling that those who put damaging content out into society should face consequences. This applies to both individual creators and the platforms that host them. Society needs to consider who is responsible when harmful material spreads widely.

The Profit Motive and Child Protection

Social media has become a massive industry, worth trillions of dollars. Companies like Meta (formerly Facebook) generate huge profits. Some argue that with such immense wealth comes a responsibility to protect the users who contribute to that success, especially children.

The core idea is that if these companies are making vast sums of money, they should invest in safeguarding their younger users. They have the resources to implement better safety features and prevent harm. It’s like a factory making a lot of money from its products; it should also spend money ensuring those products are safe for everyone, especially children.

Why This Matters

The discussion highlights a growing tension between the business models of social media companies and the well-being of young users. These platforms are designed to capture and hold attention, which is profitable but can come at a significant cost to mental health and development. The ease of access to potentially harmful content and the difficulty in protecting children online are serious issues that parents and society are grappling with.

Historical Context and Background

The rise of social media in the early 2000s promised connection and community. Platforms like MySpace and then Facebook aimed to bring people together online. However, as these platforms grew, their focus shifted towards maximizing user engagement for advertising revenue. This led to the development of algorithms designed to keep users scrolling, often by showing them content that elicits strong emotional responses. This business model, while profitable, has increasingly drawn criticism for its potential negative impacts, especially on younger, more impressionable users.

Implications, Trends, and Future Outlook

The concerns raised in these conversations are driving calls for greater regulation and corporate responsibility. Parents are increasingly seeking more control over their children's online activities, using parental controls and limiting screen time. There is also a growing demand for social media companies to be more transparent about their algorithms and to implement stronger content moderation and age verification systems.

The future likely holds more debate and potentially new laws aimed at protecting children online. Companies may face increased pressure to redesign their platforms to be less addictive and safer. This could involve features that encourage breaks, limit exposure to certain content, or provide more robust tools for parents. However, the immense profitability of the current model means that companies will likely resist changes that could impact their revenue. The challenge will be finding a balance between innovation, profit, and the fundamental need to protect vulnerable populations.

Source: Citizens Weigh in on Social Media Addiction in Children (YouTube)