Nvidia Unveils AI Graphics Tech, Sparks ‘Beauty Filter’ Debate

Nvidia's new DLSS5 technology uses AI to reimagine game lighting and materials, aiming for photorealism. While impressive, it has sparked controversy, with critics calling it an "AI beautifier" that alters character appearances. The debate highlights a growing tension over AI's role in game development and artistic control.

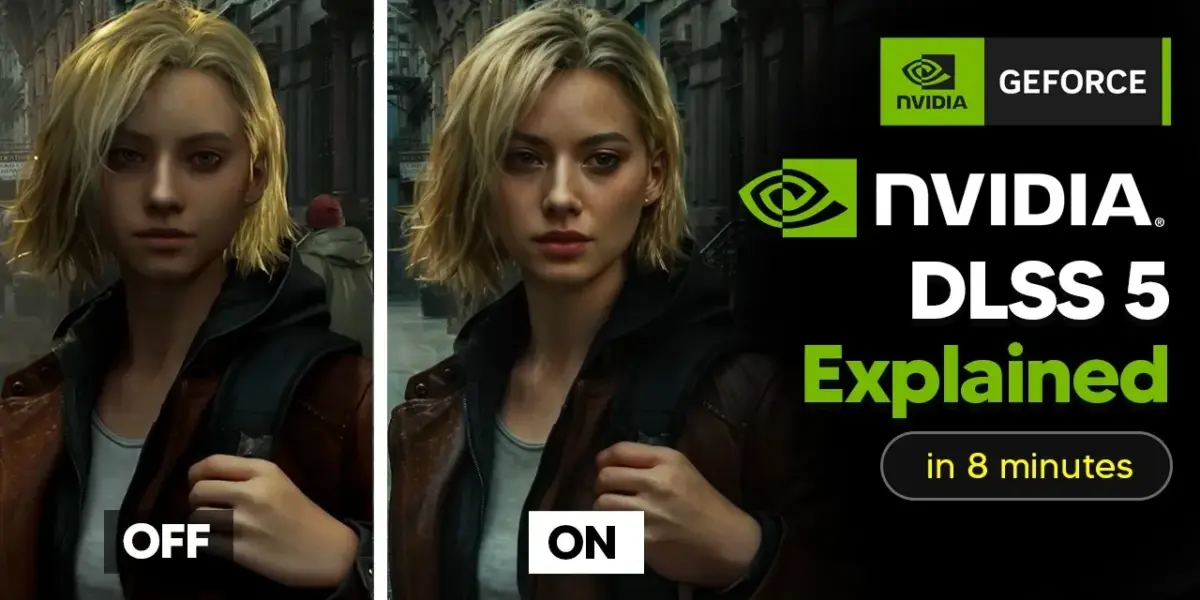

On March 7th, 2026, Nvidia showcased a stunning demo of the game Resident Evil Requiem. The audience noticed something striking about the character Grace Ashcroft on screen. Her lips appeared fuller, her cheekbones sharper, and her makeup seemed different. This wasn’t a game update; Nvidia had run the game through a new AI model. Within minutes, the term “AI beautifier” began trending online.

This new technology is called DLSS5. Nvidia CEO Jensen Huang described it as the “GPT moment for graphics.” It was revealed at GTC 2026 and is being called the biggest leap in computer graphics since real-time ray tracing arrived in 2018. However, many on the internet are comparing it to an Instagram beauty filter for video games.

What DLSS5 Actually Does

So, what is DLSS5 doing differently? It’s not just upscaling images or creating extra frames to make games run smoother, which is what previous versions of DLSS did. Instead, DLSS5 is a neural rendering model. It takes each frame generated by the game engine and completely re-imagines the lighting and materials within the game. The goal is to make everything look more realistic, like a photograph.

This is the first time Nvidia has used AI not just to improve game performance, but to fundamentally change how a game looks. This is precisely why it has become a point of controversy.

How DLSS5 Works

Let’s look closer at the technology. Previous DLSS versions focused on efficiency. DLSS Super Resolution would render games at a lower resolution and use AI to make the image sharper. DLSS Frame Generation would create new, intermediate frames to boost smoothness. Both aimed to make the same game run faster. DLSS5, however, does something entirely different.
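To make the efficiency argument concrete, here is a rough back-of-the-envelope sketch in Python. The resolutions (1440p internal, 4K output) and the ratio of one generated frame per rendered frame are illustrative assumptions, not figures from Nvidia, and the function names are hypothetical.

```python
def pixel_fraction(render_res, output_res):
    """Fraction of the output pixels that the engine actually renders."""
    render_w, render_h = render_res
    output_w, output_h = output_res
    return (render_w * render_h) / (output_w * output_h)


def displayed_fps(rendered_fps, generated_per_rendered):
    """Displayed frame rate when extra frames are inserted between rendered ones."""
    return rendered_fps * (1 + generated_per_rendered)


# Assumed example: render at 2560x1440, then upscale to 3840x2160.
fraction = pixel_fraction((2560, 1440), (3840, 2160))
print(f"Engine renders {fraction:.0%} of the output pixels")

# Assumed example: 60 rendered fps, one generated frame per rendered frame.
print(f"Displayed frame rate: {displayed_fps(60, 1)} fps")
```

Under these assumptions the engine shades a bit under half of the final pixels, and the displayed frame rate doubles. In both cases the game's intended look stays the same; only the cost of producing it changes.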

Here’s the DLSS5 process. First, the game engine renders a frame as usual. This includes all the shapes, textures, lighting, and details. It then outputs two main things: the color buffer, which is the actual image you see, and motion vectors. Motion vectors track how each pixel moves between frames.
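As a toy illustration of what a motion vector encodes, the sketch below shifts the pixels of a previous frame by their per-pixel offsets to estimate where they land in the next frame. This is not how DLSS consumes motion vectors internally; the function name, the list-of-lists frame layout, and the forward direction of the vectors are all assumptions made for the example.

```python
def reproject(prev_frame, motion_vectors, fill=0):
    """Move each pixel of prev_frame by its (dx, dy) motion vector.

    prev_frame: 2D list of pixel values, indexed as [row][column].
    motion_vectors: 2D list of (dx, dy) offsets with the same shape.
    Pixels that leave the frame are dropped, and uncovered positions keep
    `fill`. Collisions and occlusion are ignored in this toy version.
    """
    height, width = len(prev_frame), len(prev_frame[0])
    warped = [[fill] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            dx, dy = motion_vectors[y][x]
            new_x, new_y = x + dx, y + dy
            if 0 <= new_x < width and 0 <= new_y < height:
                warped[new_y][new_x] = prev_frame[y][x]
    return warped


# Assumed example: the whole image slides one pixel to the right.
prev = [[1, 2, 3],
        [4, 5, 6]]
vectors = [[(1, 0)] * 3 for _ in range(2)]
print(reproject(prev, vectors))  # [[0, 1, 2], [0, 4, 5]]
```

The leftmost column ends up empty because nothing moved into it. Gaps like that are one reason a model working across frames needs motion data alongside the color buffer.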

DLSS5 takes these two inputs. A special neural rendering model, trained on Nvidia’s supercomputers, then analyzes the frame. Critically, the model doesn’t just look at the pixels. Nvidia states it understands the entire scene. It recognizes characters, fabrics, hair, and even subtle skin tones. It reads the environmental lighting, figuring out if the scene is lit from the front, back, or is under an overcast sky, all from a single image.

Based on this understanding, it generates a new version of the frame. This new frame aims to have photorealistic lighting and materials as the AI believes they should look. Think of it like this: ray tracing shows where light should go, accurately placing shadows and reflections. DLSS5 takes this further by trying to make those lighting interactions look like they do in real life. This includes effects like subsurface scattering on skin, which gives flesh a warm, translucent quality. It also aims to capture the delicate sheen on fabric and the way individual strands of hair catch and scatter light. These are effects that even advanced ray tracing struggles to do convincingly in real-time because they require immense computing power.

DLSS5 tries to figure out these complex lighting effects using its AI model. The final output is still tied to the original 3D content. It doesn’t create new shapes or replace textures. Instead, it applies a neural rendering pass on top of what the game engine already created. This keeps the structure and movement of the original scene the same while transforming how light interacts with surfaces.

Hardware Requirements and Initial Concerns

At GTC, Nvidia demonstrated DLSS5 in four games, including Hogwarts Legacy, Starfield, and Oblivion Remastered. However, a key detail emerged: the demo required two high-end RTX 5090 graphics cards. One card rendered the game, while the second was dedicated solely to running the DLSS5 neural model. Each of these cards costs around $2,000.

Nvidia plans to optimize DLSS5 to run on a single GPU by its full launch. But currently, it’s a two-GPU technology needing roughly $4,000 worth of hardware. This brings us to the controversy and the immediate, strong backlash.

The Backlash: An AI Beauty Filter?

Why are people upset? PC gamer Tyler McVicker reviewed Nvidia’s demo frame by frame. He concluded that Grace Ashcroft’s face in Resident Evil Requiem wasn’t just lit more realistically; the facial features themselves were changed. He noted fuller lips and sharper cheekbones. He called it an “apparent bias for certain beauty standards trained into the AI model.” The term that stuck was “beautification,” referencing the trend where AI tools make everyone look like a filtered Instagram model.

Will Smith, co-founder of Tested, posted on Bluesky: “Nvidia, what if we introduce ray tracing so you can have really high quality real-time lighting? Also, Nvidia, what if we throw out that lighting to run the beautifier filter so everyone looks hot?”

The deeper concern goes beyond just individual faces. For years, DLSS was seen as a helpful addition. It improved game performance without altering the game’s visual appearance. DLSS5 appears to break that promise. Nvidia has responded, stating that developers have full artistic control. They can use intensity sliders, color grading, and regional adjustments through Nvidia’s Streamline framework to fine-tune how DLSS5 effects are applied. Bethesda confirmed that DLSS5 integration in Starfield and Oblivion Remastered is entirely managed by their artists. Importantly, DLSS5 can be turned on or off by the player.
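One way to picture an intensity slider combined with regional adjustments is as a per-pixel blend between the engine's original frame and the neural output. The sketch below is purely illustrative: the function names, the mask layout, and the linear blend are assumptions, and none of it reflects the actual Streamline API or how DLSS5 applies these controls.

```python
def blend_pixel(original, enhanced, intensity, region_weight=1.0):
    """Linearly mix one RGB pixel of the engine output with the neural output."""
    # Clamp the combined weight so out-of-range slider values stay valid.
    weight = max(0.0, min(1.0, intensity * region_weight))
    return tuple((1.0 - weight) * o + weight * e
                 for o, e in zip(original, enhanced))


def blend_frame(original, enhanced, intensity, mask=None):
    """Blend two frames (2D lists of RGB tuples) with an optional regional mask.

    mask holds a weight in [0, 1] per pixel; 0 keeps the original pixel,
    for example on a character's face. Without a mask, every pixel uses 1.0.
    """
    result = []
    for y, row in enumerate(original):
        out_row = []
        for x, pixel in enumerate(row):
            region = 1.0 if mask is None else mask[y][x]
            out_row.append(blend_pixel(pixel, enhanced[y][x], intensity, region))
        result.append(out_row)
    return result


# Hypothetical one-row frame: the second pixel is masked out (say, a face).
original = [[(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)]]
enhanced = [[(1.0, 1.0, 1.0), (1.0, 1.0, 1.0)]]
print(blend_frame(original, enhanced, intensity=0.5, mask=[[1.0, 0.0]]))
```

In this toy model, setting the intensity to zero returns the engine's frame untouched, which corresponds to the on/off toggle available to players.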

Modding Concerns and Industry Trends

However, Digital Foundry raised another point. Because DLSS5 uses Streamline, the same system used for switching between DLSS versions, they worry that players might force DLSS5 onto games not designed for it. In this scenario, official developer controls might become irrelevant if modders bypass them.

This backlash, while immediate, is part of a larger trend in the gaming industry. DLSS5 isn’t just a single experiment. At CES 2026, Nvidia CEO Jensen Huang declared, “The future is neural rendering.” Meanwhile, Google DeepMind has shown off Genie 3, a world model that can generate entire interactive 3D environments from text prompts in real-time. While currently limited in consistency, this points towards a future where entire game worlds could be generated by AI.

DLSS5 appears to be a step in this direction. It doesn’t replace the entire game engine; the game still renders its core elements. DLSS5 adds a neural enhancement on top, creating a hybrid experience of traditional rendering mixed with generative AI.

If Nvidia’s predictions hold true and the models continue to improve as rapidly as DLSS upscaling has over the past six years, current versions might seem basic compared to what will be available in two to three years. The list of games committed to supporting DLSS5 shows the industry is taking this seriously. Companies like Bethesda, Capcom, Ubisoft, Tencent, and Warner Bros. Games are on board. Over a dozen titles are announced for DLSS5 at launch this fall, including Assassin’s Creed Shadows, Phantom Blade, Resident Evil, and Starfield. These are major releases from the biggest publishers.

Why This Matters

The timing of DLSS5’s announcement is notable. It’s March 2026, and the debate around AI is at its peak. Artists are raising concerns about generative AI in court, inside studios, and on social media. The gaming community has spent years pushing back against AI-generated content in games. In this climate, Nvidia revealed a technology that some see as an AI filter that changes how game characters look, and called it the “GPT moment for graphics.” The messaging could have been better.

The technology itself is impressive. Hands-on reviews suggest it’s genuinely remarkable when used appropriately. PCMag’s reviewer called it the most lifelike gaming graphics they had encountered. Tom’s Hardware found it exciting, though they cautioned about the facial changes. Even critics acknowledge that improvements in environmental lighting, material details, and subsurface scattering represent a real leap forward.

The real conflict isn’t about whether neural rendering works – it clearly does. The core issue is who gets to control the final look of the games: the developers, the players, or the AI itself.

Source: DLSS 5 Explained Clearly In 8 Minutes (How It Actually Works) (YouTube)